If the CloudBees High Availability (HA) Plugin is not working as expected, use this page to troubleshoot.

Versions

Ensure that:

- All instances run the same CloudBees CI (or Jenkins) version.
- All instances run the same JDK version.

Prerequisites

Verify that both of your nodes have the following configuration:

- JAVA_OPTS contains -Dhudson.TcpSlaveAgentListener.hostName=<HOSTNAME>. If the nodes or JNLP agents are in different networks, use the FQDN (if there is no public domain name, use a static IP address instead).
- JENKINS_ARGS contains --webroot=$LOCAL_FILESYSTEM/war and --pluginroot=$LOCAL_FILESYSTEM/plugins.
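As a quick way to verify the options listed above, the following sketch checks two option strings for the required flags. The function names has_flag and check_ha_prereqs are hypothetical helpers, not part of CloudBees CI, and how you obtain the JAVA_OPTS and JENKINS_ARGS values depends on your installation.

```shell
# Sketch only: has_flag and check_ha_prereqs are hypothetical helper names.

has_flag() {
    # $1 = full option string (for example the value of JAVA_OPTS)
    # $2 = flag prefix to look for; it must start a whitespace-separated word
    case " $1 " in
        *" $2"*) return 0 ;;
        *) return 1 ;;
    esac
}

check_ha_prereqs() {
    # $1 = JAVA_OPTS value, $2 = JENKINS_ARGS value
    missing=0
    has_flag "$1" "-Dhudson.TcpSlaveAgentListener.hostName=" ||
        { echo "JAVA_OPTS is missing -Dhudson.TcpSlaveAgentListener.hostName"; missing=1; }
    has_flag "$2" "--webroot=" ||
        { echo "JENKINS_ARGS is missing --webroot"; missing=1; }
    has_flag "$2" "--pluginroot=" ||
        { echo "JENKINS_ARGS is missing --pluginroot"; missing=1; }
    return "$missing"
}

# Example: check_ha_prereqs "$JAVA_OPTS" "$JENKINS_ARGS" || echo "fix the options above"
```

The check only confirms that each flag is present; it does not validate the host name or the paths.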

Required data for troubleshooting

This section describes how to collect the following minimum required information for troubleshooting HA issues.

- Network infrastructure description
- Support bundle of the instance in HA
- JGroups customization (optional)
- Output from Troubleshooting application
- Logs from Active and Secondary node

| If the required data is bigger than 20 MB, refer to Generating a support bundle - Sending a support bundle too large for Zendesk. |

Network infrastructure description

A brief description of your network infrastructure, including:

- Is there a firewall (or any other interposed network device) between the two nodes? If so, you need a customized jgroups.xml to use fixed ports. For more information, see Customize JGroups to use fixed ports if running behind a firewall.
- Do you have several network interfaces on those instances?

Support bundle

A support bundle from the Jenkins instance, generated while the issue is occurring. Refer to Generating a support bundle for instructions.

JGroups customization (optional)

By default, JGroups picks random ports unless you configure jgroups.xml to use fixed ports.

If you have configured jgroups.xml, attach it to the ticket.

Output from Troubleshooting application

To simplify the troubleshooting process of the network issues, CloudBees has published the troubleshooter program. This program runs the same lower level stack as Jenkins HA and therefore exercises the network in the exact same fashion. When you type text from stdin and hit Enter, you should see the text echoed on all nodes of the cluster (including the node in which you typed the text).

A good first step to diagnose the network problem is to run two instances of the troubleshooter program on the same host and see if they can communicate with each other. Then do the same on the other host so that you can further isolate the problem.

Two options:

Non-Logging mode

Run the following command on both instances to determine whether the primary and backup nodes are selected correctly (change $JENKINS_HOME to the corresponding value):

java -DJENKINS_HOME=$JENKINS_HOME -DHA_JGROUPS_DIR=$JENKINS_HOME/jgroups/ -Djgroups.bind_addr=<IP_ADDRESS> -Djava.net.preferIPv4Stack=true -jar troubleshooter-<VERSION>-jar-with-dependencies.jar

Logging mode

If the promotion process does not work correctly (for example, both nodes run as the primary node), run the troubleshooter application in logging mode to expose the problem. (Change $JENKINS_HOME to the corresponding value.)

java -DJENKINS_HOME=$JENKINS_HOME -DHA_JGROUPS_DIR=$JENKINS_HOME/jgroups/ -Djgroups.bind_addr=<IP_ADDRESS> -Dlogging.org.jgroups=ALL -Dlogging.com.cloudbees.jenkins.ha=ALL -Djava.net.preferIPv4Stack=true -Dha-troubleshooter.filelogging -jar troubleshooter-<VERSION>-jar-with-dependencies.jar

Note about file logging

The -Dha-troubleshooter.filelogging option enables file logging with log rotation. By default, it rotates across 10 files of 10 MB each, for a total of 100 MB. This lets you leave the tool running in the background while waiting for the issue to reoccur.

If you need to cover a longer period of time, you can also use -Dha-troubleshooter.filelogging.count=NN to raise the default value of 10. For example, to cover a whole weekend, you can use -Dha-troubleshooter.filelogging.count=100, which rotates across 100 files of 10 MB each and consumes a maximum of 1 GB of disk space.
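The disk budget for a given rotation setting is the file count multiplied by the per-file size. The following sketch computes it, assuming the 10,000,000-byte per-file size that the tool reports at startup; max_log_bytes is a hypothetical helper name.

```shell
# Sketch only: max_log_bytes is a hypothetical helper name.

max_log_bytes() {
    # $1 = number of rotated files (the filelogging.count value)
    # $2 = maximum size of each file, in bytes
    echo $(( $1 * $2 ))
}

# Default settings (10 files of 10 MB):
#   max_log_bytes 10 10000000     prints 100000000
# Weekend example (100 files of 10 MB):
#   max_log_bytes 100 10000000    prints 1000000000
```

Compare the result against the free space on the disk where the troubleshooter runs before leaving it unattended.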

When the tool starts, it displays the values of these settings so that you can confirm that they were taken into account. For example:

Logs File Rotation enabled: # of files: 10, max size per file: 10000000, pattern: ha-troubleshooting.abcd.%u.log

The tool puts four random hexadecimal digits in the file name to avoid clashing with existing files, for example when running the tool on several nodes of an HA cluster.

Operations center and client controller nodes do not form a cluster

See the JGroups troubleshooting guide for typical problems. When nodes don’t form a cluster, it is normally either because the protocol needs additional configuration or there’s a problem in the network configuration of the operating system or the network equipment (for example, nodes cannot “see” one another via TCP).

Ensure that all the instances are using the same Unix user:group

Ensure that the user ID used to run the Jenkins process is the same on all three servers: NFS, Jenkins primary, and Jenkins failover.

Run the following command on the three instances and ensure that the ID returned for the user is the same. For this example, jenkins:jenkins is used.

id -u jenkins
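Once you have collected the output of that command from each server, a small comparison like the following sketch can confirm that the values match. The all_same function is a hypothetical helper, and the host names in the commented example are placeholders.

```shell
# Sketch only: all_same is a hypothetical helper name.

all_same() {
    # Succeeds when every argument is identical to the first one.
    first=$1
    for value in "$@"; do
        [ "$value" = "$first" ] || return 1
    done
    return 0
}

# Example with placeholder host names (requires SSH access to each server):
#   all_same "$(ssh nfs-host id -u jenkins)" \
#            "$(ssh primary-host id -u jenkins)" \
#            "$(ssh failover-host id -u jenkins)" || echo "UID mismatch"
```

The same helper works for group IDs if you pass the output of id -g jenkins instead.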

If the ID is not the same on the three instances, use the following Unix commands to create the jenkins:jenkins user and group (needed on the NFS instance) and to modify the IDs of the user and group.

sudo useradd jenkins
sudo usermod -u [jenkins UID] jenkins
sudo groupadd jenkins
sudo groupmod -g [jenkins GID] jenkins

Ensure that the owner of $JENKINS_HOME is the user that runs the Jenkins process

The user that runs the Jenkins process should be the owner of $JENKINS_HOME. Run ls -la $JENKINS_HOME to check this. If you need to change the owner, use the following command.

sudo chown -R jenkins:jenkins $JENKINS_HOME
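To check ownership from a script instead of reading the ls output by eye, a sketch along these lines compares the numeric owner of a directory with an expected UID. The function names are hypothetical, and the check only covers the top-level directory, not every file beneath it.

```shell
# Sketch only: owner_uid and is_owned_by_uid are hypothetical helper names.

owner_uid() {
    # Print the numeric UID that owns the given path
    # (third field of the "ls -ldn" output).
    ls -ldn "$1" | awk '{ print $3 }'
}

is_owned_by_uid() {
    # $1 = path, $2 = expected numeric UID
    [ "$(owner_uid "$1")" = "$2" ]
}

# Example:
#   is_owned_by_uid "$JENKINS_HOME" "$(id -u jenkins)" || echo "unexpected owner"
```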

Customize JGroups to use fixed ports if running behind a firewall

By default, the CloudBees HA plugin uses a random port for communication. If the instances are running behind a firewall, you have two options to configure the fixed ports.

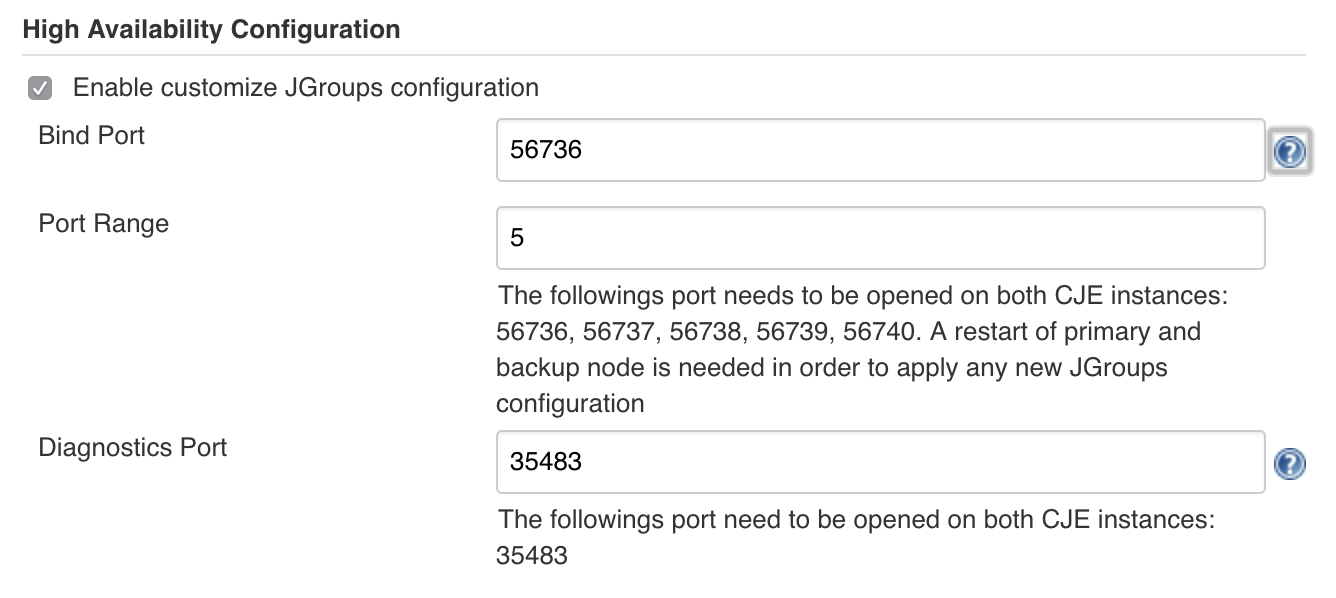

The following examples mean that you need to open the following ports on the firewall: 56736, 56737, 56738, 56739, 56740 and 35483.

From the UI

Starting from CloudBees High Availability 4.8 it is possible to configure the ports via the UI. Go to , and locate the High Availability Configuration section. Enable the customization, specify the ports and restart both nodes in HA singleton.

Bind JGroups with the right network interface

If either of the two instances used to form a cluster has more than one network interface, you must tell JGroups which one to use to listen for packets. The following two Java arguments are used for this purpose:

-Djgroups.bind_addr=<IP_ADDRESS> -Djava.net.preferIPv4Stack=true

where <IP_ADDRESS> is the IP address of the network interface on the instance that can reach the other node in the cluster.

| In case of any issue with the CloudBees High Availability plugin, these arguments should be set up to ensure JGroups is bound to the right interface. |

If both nodes are running in singleton mode

The nodes of the JGroups cluster cannot communicate with each other, so a trace similar to the following is shown on both nodes:

-------------------------------------------------------------------
GMS: address=stg-rbl-jnk-mas-w2a-a-43979, cluster=Jenkins, physical address=10.65.45.107:38926
-------------------------------------------------------------------
Oct 10, 2018 5:09:08 AM org.jgroups.protocols.pbcast.ClientGmsImpl joinInternal
WARNING: stg-rbl-jnk-mas-w2a-a-43979: JOIN(stg-rbl-jnk-mas-w2a-a-43979) sent to stg-rbl-jnk-mas-w2a-a-18558 timed out (after 3000 ms), on try 1
Oct 10, 2018 5:09:11 AM org.jgroups.protocols.pbcast.ClientGmsImpl joinInternal
WARNING: stg-rbl-jnk-mas-w2a-a-43979: JOIN(stg-rbl-jnk-mas-w2a-a-43979) sent to stg-rbl-jnk-mas-w2a-a-18558 timed out (after 3000 ms), on try 2
Oct 10, 2018 5:09:14 AM org.jgroups.protocols.pbcast.ClientGmsImpl joinInternal
WARNING: stg-rbl-jnk-mas-w2a-a-43979: JOIN(stg-rbl-jnk-mas-w2a-a-43979) sent to stg-rbl-jnk-mas-w2a-a-18558 timed out (after 3000 ms), on try 3
Oct 10, 2018 5:09:17 AM org.jgroups.protocols.pbcast.ClientGmsImpl joinInternal
WARNING: stg-rbl-jnk-mas-w2a-a-43979: JOIN(stg-rbl-jnk-mas-w2a-a-43979) sent to stg-rbl-jnk-mas-w2a-a-18558 timed out (after 3000 ms), on try 4
Oct 10, 2018 5:09:20 AM org.jgroups.protocols.pbcast.ClientGmsImpl joinInternal
WARNING: stg-rbl-jnk-mas-w2a-a-43979: JOIN(stg-rbl-jnk-mas-w2a-a-43979) sent to stg-rbl-jnk-mas-w2a-a-18558 timed out (after 3000 ms), on try 5
Oct 10, 2018 5:09:20 AM org.jgroups.protocols.pbcast.ClientGmsImpl joinInternal
WARNING: stg-rbl-jnk-mas-w2a-a-43979: too many JOIN attempts (5): becoming singleton
Oct 10, 2018 5:09:20 AM com.cloudbees.jenkins.ha.singleton.HASingleton$3 viewAccepted
INFO: Cluster membership has changed to: [stg-rbl-jnk-mas-w2a-a-43979|0] (1) [stg-rbl-jnk-mas-w2a-a-43979]
Oct 10, 2018 5:09:20 AM com.cloudbees.jenkins.ha.singleton.HASingleton$3 viewAccepted
INFO: New primary node is JenkinsClusterMemberIdentity[member=stg-rbl-jnk-mas-w2a-a-43979,weight=0,min=0]
Oct 10, 2018 5:09:20 AM com.cloudbees.jenkins.ha.singleton.HASingleton reactToPrimarySwitch
INFO: Elected as the primary node
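To spot this condition without reading the whole log, you can search for the two messages that characterize the trace. The following sketch assumes the message wording shown in the trace; the function names are hypothetical, and the log file location depends on your installation.

```shell
# Sketch only: became_singleton and count_join_timeouts are hypothetical helper names.

became_singleton() {
    # $1 = path to a Jenkins log file
    # Succeeds when the node gave up joining and became a singleton.
    grep -q "too many JOIN attempts" "$1"
}

count_join_timeouts() {
    # $1 = path to a Jenkins log file
    # Prints the number of JOIN requests that timed out.
    grep -c "timed out (after" "$1"
}

# Example:
#   became_singleton /path/to/jenkins.log && echo "node fell back to singleton mode"
```

Running the check on both nodes helps confirm that each one elected itself as primary.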

Action: Delete all the content inside $JENKINS_HOME/jgroups to reset the JGroups cluster. The content will be regenerated after the next restart of the active and passive nodes.
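One way to carry out this action is sketched below. It removes everything inside the jgroups directory but keeps the directory itself. The function name reset_jgroups_dir is hypothetical, and the -mindepth and -delete options assume GNU or BSD find. Double-check the path before running it, because the deletion cannot be undone.

```shell
# Sketch only: reset_jgroups_dir is a hypothetical helper name.

reset_jgroups_dir() {
    # $1 = Jenkins home directory
    [ -n "$1" ] || { echo "usage: reset_jgroups_dir JENKINS_HOME" >&2; return 2; }
    dir="$1/jgroups"
    [ -d "$dir" ] || { echo "no such directory: $dir" >&2; return 1; }
    # Delete every entry below $dir, but not $dir itself.
    find "$dir" -mindepth 1 -delete
}

# Example:
#   reset_jgroups_dir "$JENKINS_HOME"
```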

If the promotion works on the troubleshooter and not in the instances

If the promotion works in the troubleshooter but not in the instances, the following experiment is recommended:

- Ensure that the latest version of the cloudbees-ha plugin is installed on both instances.
- Stop the service of the Jenkins instances.
- Add the Java argument -Dcom.cloudbees.jenkins.ha.level=ALL in both instances so that all the JGroups and HA logs are exposed.
- Start one instance.
- Wait 10 seconds or so.
- Start the second instance.
- Check the full logs of the Jenkins instances, which you can usually find under /var/log/jenkins.

After the experiment, remove the -Dcom.cloudbees.jenkins.ha.level=ALL argument that you added on both instances.

Test HA cluster membership

When two nodes that form a cluster lose contact, each node assumes that the other has died and takes over as the primary node. This is called a "split brain" problem. It is problematic because you end up with two independently acting operations centers. A similar problem can occur if one node in the cluster is severely stressed under load. The HA Monitor Tool includes a sanity check script that lets you apply some heuristics to reduce the likelihood of this problem.

To test for cluster membership roles, the executable sanity-check.sh script in $JENKINS_HOME is run before a node assumes the primary role, as well as when there is a change in the cluster membership. This lets you confirm that the node should really proceed to act as the primary. If the script exits with 0, the node boots up as the primary node; if it exits with a non-zero status, the node does not act as the primary node. The sanity script can also check the availability of $JENKINS_HOME, whether the load balancer is routable, or whether the system load is reasonably low.
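As an illustration of the exit-code contract described above, the following sketch shows the kind of checks a sanity-check.sh script could perform. It is not the script shipped by CloudBees. All function names are hypothetical, the load threshold of 8 is an arbitrary example, reading /proc/loadavg is Linux-specific, and the load balancer check is left out because it depends on your environment.

```shell
# Sketch only: every function name and the default threshold are illustrative.

home_ok() {
    # Succeeds when the directory exists and is writable by the current user.
    [ -d "$1" ] && [ -w "$1" ]
}

load_ok() {
    # $1 = 1-minute load average, $2 = maximum acceptable load
    awk -v load="$1" -v max="$2" 'BEGIN { exit !(load + 0 <= max + 0) }'
}

current_load() {
    # Linux-specific: the first field of /proc/loadavg is the 1-minute load average.
    cut -d ' ' -f 1 /proc/loadavg
}

sanity_check() {
    # $1 = Jenkins home, $2 = maximum load (defaults to 8)
    home_ok "$1" || return 1
    load_ok "$(current_load)" "${2:-8}" || return 1
    return 0
}

# As the last line of a sanity-check.sh script:
#   sanity_check "$JENKINS_HOME" || exit 1
```

Returning a non-zero status when either check fails keeps a node with an unreachable $JENKINS_HOME or an overloaded system from taking the primary role.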

HAProxy connectivity issues

If you are having issues with HAProxy connectivity, modify the defaults section of the HAProxy config to troubleshoot with these additional options:

defaults
    log global
    # The following log settings are useful for debugging
    # Tune these for production use
    option logasap
    option http-server-close
    option redispatch
    option abortonclose
    option log-health-checks
    mode http
    option dontlognull
    retries 3
    maxconn 2000
    timeout http-request 10s
    timeout queue 1m
    timeout connect 5000
    timeout client 50000
    timeout server 50000
    timeout http-keep-alive 10s
    timeout check 500
    default-server inter 5s downinter 500 rise 1 fall 1