Issue

You have received an error in Jenkins whose stack trace contains Too many open files.

Example:

Caused by: java.io.IOException: Too many open files
    at java.io.UnixFileSystem.createFileExclusively(Native Method)
    at java.io.File.createNewFile(File.java:1006)
    at java.io.File.createTempFile(File.java:1989)

Or

java.net.SocketException: Too many open files
    at java.net.PlainSocketImpl.socketAccept(Native Method)
    at java.net.AbstractPlainSocketImpl.accept(AbstractPlainSocketImpl.java:398)

Resolution

At the user level, check the numerical limit of open files currently allowed. The method for doing this depends on which version and which package format of CloudBees CI/CloudBees Jenkins Platform you are using.

All non-RPM package versions, and RPM versions before 2.289.2.2

RPM package versions of CloudBees CI before 2.289.2.2, and all other package formats, still use SysV-style init scripts, and so the traditional ulimit and limits.conf mechanisms for checking and setting user limits apply.

To see the current limits of your system, run ulimit -a on the command line as the user running Jenkins. You should see something like this:

core file size          (blocks, -c) 0
data seg size           (kbytes, -d) unlimited
scheduling priority             (-e) 30
file size               (blocks, -f) unlimited
pending signals                 (-i) 30654
max locked memory       (kbytes, -l) unlimited
max memory size         (kbytes, -m) unlimited
open files                      (-n) 1024
pipe size            (512 bytes, -p) 8
POSIX message queues     (bytes, -q) 819200
real-time priority              (-r) 99
stack size              (kbytes, -s) 8192
cpu time               (seconds, -t) unlimited
max user processes              (-u) 1024
virtual memory          (kbytes, -v) unlimited
file locks                      (-x) unlimited
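Because ulimit -a reports only the soft limits by default, it can help to print the soft and hard open-file limits separately. The following is a minimal sketch, assuming a bash or other POSIX-like shell run as the user that runs Jenkins; the variable names are illustrative only.

```shell
# Soft limit: the value currently enforced for processes started from this shell.
soft_nofile=$(ulimit -Sn)
# Hard limit: the ceiling up to which the soft limit can be raised without root.
hard_nofile=$(ulimit -Hn)

echo "open files (soft): ${soft_nofile}"
echo "open files (hard): ${hard_nofile}"
```

Note that these values describe the current shell session. A Jenkins process that is already running keeps the limits it was started with.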

To increase limits, add these lines to /etc/security/limits.conf per our Best Practices:

jenkins soft nofile 8192
jenkins hard nofile 16384
jenkins soft nproc 30654
jenkins hard nproc 30654

Note that this assumes jenkins is the user running the Jenkins process. The user varies depending on what service and version you are running:

CloudBees CI controller: cloudbees-core-cm

CloudBees CI Operations Center: cloudbees-core-oc

CloudBees Jenkins Platform controller: jenkins

CloudBees Jenkins Platform Operations Center: jenkins-oc
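Since the account name differs per product, the limits.conf entries can be generated for whichever user applies. This is only a sketch: the helper name print_limits_conf is hypothetical, and the numeric values are the ones recommended in this article.

```shell
# Print limits.conf entries for the account that runs the service.
# $1: user name (for example jenkins or cloudbees-core-cm).
print_limits_conf() {
  printf '%s soft nofile 8192\n' "$1"
  printf '%s hard nofile 16384\n' "$1"
  printf '%s soft nproc 30654\n' "$1"
  printf '%s hard nproc 30654\n' "$1"
}

# Example: entries for a CloudBees CI controller.
print_limits_conf cloudbees-core-cm
```

Review the output first, then append it to /etc/security/limits.conf as root, for example by piping it to sudo tee -a /etc/security/limits.conf.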

You can now log out and log back in, then check that the limits have been modified correctly with ulimit -a.

Depending on your operating system, additional changes might be needed for these settings to take effect. Check with your operating system team for the specifics of these changes.

- Example: If you are running SUSE Linux Enterprise, you might need to verify that your /etc/pam.d/login file contains this line:

  session required pam_limits.so
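The PAM check can also be scripted. The sketch below assumes the line is written with the bare module name pam_limits.so (not a full path) and treats commented-out lines as disabled; the function name pam_limits_enabled is hypothetical.

```shell
# Return success if the given PAM file has an active (uncommented)
# "session required pam_limits.so" line.
# $1: path to the PAM configuration file.
pam_limits_enabled() {
  grep -Eq '^[[:space:]]*session[[:space:]]+required[[:space:]]+pam_limits\.so' "$1"
}

# /etc/pam.d/login is the file named in the example; other systems may use a different file.
pam_file=/etc/pam.d/login
if [ -r "$pam_file" ]; then
  if pam_limits_enabled "$pam_file"; then
    echo "pam_limits.so is enabled in $pam_file"
  else
    echo "pam_limits.so is NOT enabled in $pam_file"
  fi
fi
```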

Limits are applied when the user logs in: you must restart Jenkins to get the new limits.

RPM package versions from 2.289.2.2 onward

CloudBees began providing systemd unit files, instead of SysV-style init scripts, in our CloudBees CI/CJP RPM packages starting in version 2.289.2.2. Systemd uses its own mechanism to check and set limits, different from the traditional ulimit system. To check the default limits that systemd is using, run systemctl show and look for the DefaultLimitNOFILE and DefaultLimitNOFILESoft values.
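Since systemctl show prints many properties, filtering the output makes the two values easier to spot. This is a sketch only: the helper name nofile_defaults is hypothetical, the unit name cloudbees-core-cm assumes a CloudBees CI controller, and older systemd versions may not report DefaultLimitNOFILESoft at all.

```shell
# Keep only the open-file defaults from `systemctl show` output read on stdin.
nofile_defaults() {
  grep -E '^DefaultLimitNOFILE(Soft)?='
}

if command -v systemctl >/dev/null 2>&1; then
  # Manager-wide defaults ("|| true" tolerates hosts where systemd is not running).
  systemctl show 2>/dev/null | nofile_defaults || true
  # Limit currently configured for the controller unit.
  systemctl show cloudbees-core-cm --property=LimitNOFILE 2>/dev/null || true
fi
```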

To change the limits for your CloudBees CI installation, we recommend creating a drop-in unit file for your customizations, which will override the defaults provided by our packages. By doing this, your changes will persist whenever you install new versions of the packages.

- Run sudo systemctl edit cloudbees-core-cm

- Add the following entries to the unit file using your editor, then save and exit:

[Service]
LimitNOFILE=8192

Then restart the CloudBees CI service.
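For automated setups, the same drop-in can be written without an interactive editor, and the effective limit can be read back from /proc after the restart. The sketch below rests on assumptions: the function names are hypothetical, and the drop-in path shown in the usage comments is the location systemctl edit normally uses for this unit.

```shell
# Write a drop-in file that sets LimitNOFILE for a unit.
# $1: drop-in directory, $2: limit value.
write_nofile_dropin() {
  mkdir -p "$1" &&
  printf '[Service]\nLimitNOFILE=%s\n' "$2" > "$1/override.conf"
}

# Print the soft and hard "Max open files" values from a /proc/<pid>/limits file.
# $1: path to the limits file.
max_open_files() {
  awk '/^Max open files/ { print $4, $5 }' "$1"
}

# Example usage on a systemd host (run as root):
#   write_nofile_dropin /etc/systemd/system/cloudbees-core-cm.service.d 8192
#   systemctl daemon-reload
#   systemctl restart cloudbees-core-cm
#   pid=$(systemctl show cloudbees-core-cm --property=MainPID | cut -d= -f2)
#   max_open_files "/proc/${pid}/limits"
```

Reading /proc/<pid>/limits shows what the running process actually received, which is a more reliable check than ulimit -a in a separate login shell.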

On RHEL 7, you may encounter a situation where this does not work. In this case, the following method may be used:

Follow the steps under "To set a limit for all services" in the Red Hat Solutions article "How to set limits for services in RHEL 7 and systemd".

| This method requires a full system reboot, rather than the typical service restart |

Docker

If Jenkins or a Jenkins agent is running inside a container, you need to increase these limits inside the container. Before Docker 1.6, all containers inherited the ulimits of the Docker daemon. Since Docker 1.6, it is possible to configure the user limits to apply to a container.

You can change the daemon default limit to apply it to all containers (older Docker releases started the daemon with docker -d; current releases use dockerd):

dockerd --default-ulimit nofile=4096:8192

You can also override default values on a specific container:

docker run --name my-jenkins-container --ulimit nofile=4096:8192 -p 8080:8080 my-jenkins-image ...
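To confirm what a running container actually received, the limits can be read from inside it. This is a sketch that assumes the image provides sh; the function name container_nofile is hypothetical.

```shell
# Print the soft and hard open-file limits seen by a process started in a running container.
# $1: container name or ID.
container_nofile() {
  docker exec "$1" sh -c 'echo "soft: $(ulimit -Sn)"; echo "hard: $(ulimit -Hn)"'
}

# Example (container name taken from the docker run command above):
#   container_nofile my-jenkins-container
```

With the docker run example above, the expectation is a soft value of 4096 and a hard value of 8192.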

| By default, Linux sets the nproc limit to the maximum value. It is possible to set the nproc limit used by a container, but be aware that nproc is a per-user value and not a per-container value. |

More information about Docker ulimits can be found here: https://docs.docker.com/engine/reference/commandline/run/

More information about the Docker daemon configuration can be found here: https://docs.docker.com/engine/reference/commandline/dockerd/

If problems persist

If you still encounter open file descriptor issues after setting this, there may be a file handle leak that will eventually exhaust any fixed limit. To track it down, you will need to install the File Leak Detector plugin.

| A JDK will be required for the File Leak Detector plugin to work properly. If you are using a JRE, please install a JDK and restart your Jenkins instance. |

Configure the File Leak Detector Plugin

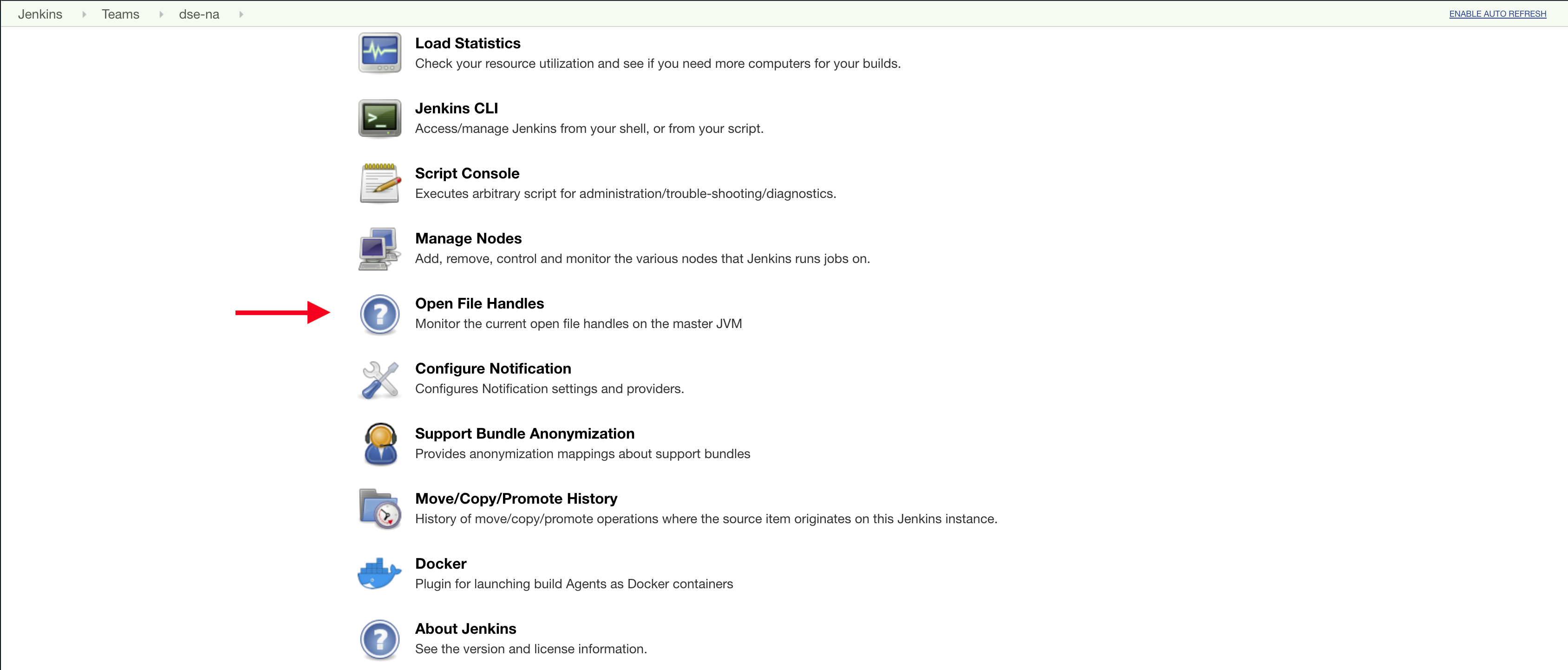

Once you install the File Leak Detector Plugin, you should be able to access it by going to Manage Jenkins > Open File Handles:

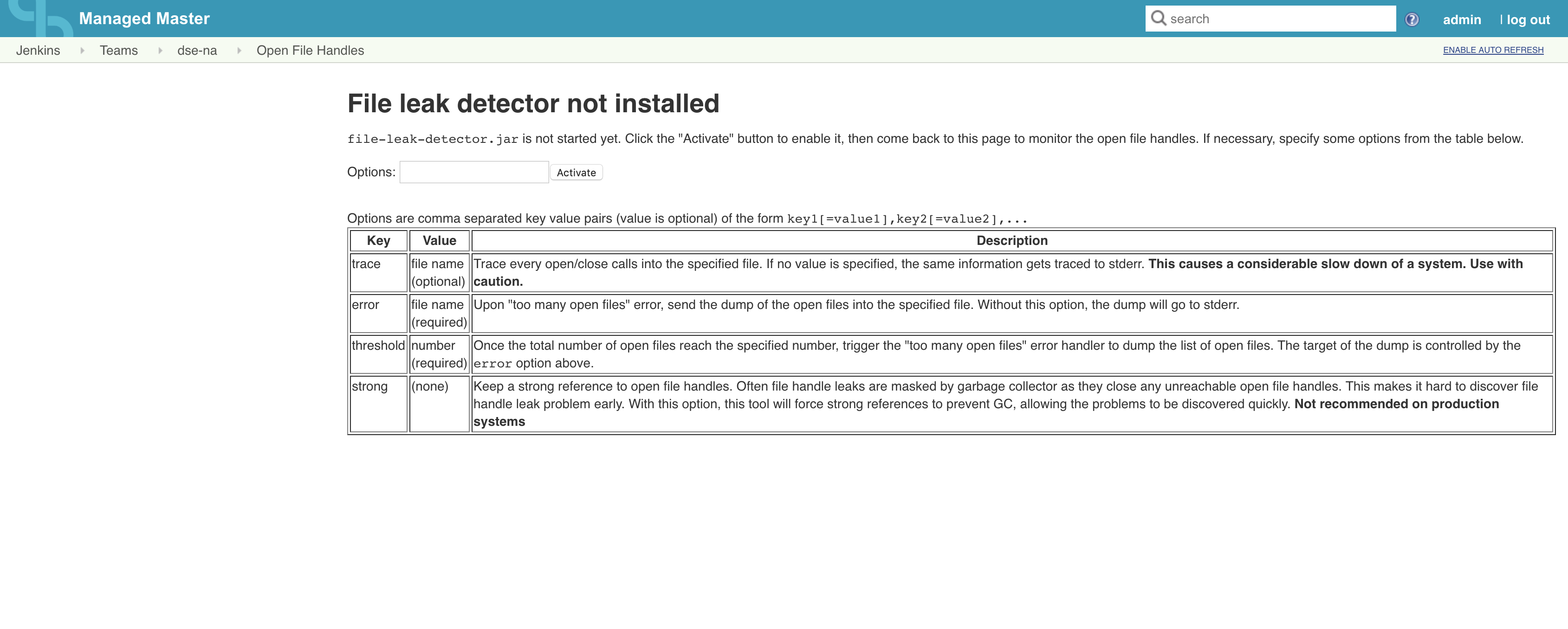

Once you click on Open File Handles you are met with some options:

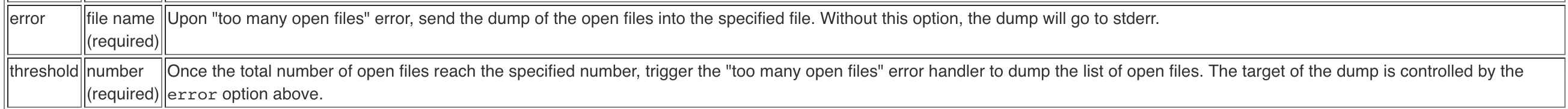

We want to focus on two options here: error and threshold.

Ideally, pass in options like this: error=/tmp/file_leak_detector.txt,threshold=5000 (use a path that exists on your system).

This means once 5000 open files are reached, it will dump the data to a text file and you can then provide that to our team for investigation. Typically, we investigate anything over 5-7K open files as abnormal.
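To see how close a process currently is to such a threshold, its open file descriptors can be counted directly. This is a Linux-only sketch that reads /proc; the function name count_open_fds is hypothetical, and reading another user's process typically requires root or that same user.

```shell
# Count the file descriptors currently open in a process (Linux /proc only).
# $1: process ID.
count_open_fds() {
  ls "/proc/$1/fd" | wc -l
}

# Example with this shell's own PID; substitute the PID of the Jenkins controller.
if [ -d "/proc/$$/fd" ]; then
  echo "open file descriptors for PID $$: $(count_open_fds "$$")"
fi
```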

Use lsof as an alternative

You can use the lsof command to output a list of currently open files. Running it as root, for example lsof > lsof_yyyymmdd_output.txt, generates a file that can be reviewed in addition to (or as an alternative to) the File Leak Detector Plugin data. However, lsof without parameters lists all open files on the system, so we recommend getting the PID of the controller process and narrowing down the output as shown below:

lsof -K i -p <pid>

Parameters explained:

- -K i causes lsof to ignore Java threads. It can be omitted if the platform does not support it.

- -p <pid> instructs lsof to list the files for the process specified by the process identifier.
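A long lsof listing is easier to review when summarized first. The sketch below counts entries per TYPE column; it assumes the default lsof column layout without thread columns (that is, with -K i in effect), where TYPE is the fifth field. The function name summarize_lsof_types is hypothetical.

```shell
# Summarize lsof output read on stdin: number of open entries per TYPE, largest first.
# Skips the header line and assumes TYPE is the fifth whitespace-separated field.
summarize_lsof_types() {
  awk 'NR > 1 { count[$5]++ } END { for (t in count) print count[t], t }' | sort -rn
}

# Example usage (replace <pid> with the PID of the controller process):
#   lsof -K i -p <pid> | summarize_lsof_types
```

A type whose count keeps growing between two runs taken some time apart (for example REG for regular files, or IPv4 for sockets) is a reasonable place to start looking for a leak.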