High Availability is provided with CloudBees CI starting with version 2.414.2.2 (September 2023).

This guide provides an overview of the CloudBees CI High Availability feature and shows you how to install CloudBees CI High Availability.

High Availability capabilities and architecture

The CloudBees CI High Availability feature provides:

- Controller failover: If a controller replica fails, another replica automatically triggers or continues the Pipeline builds that would normally run on it.
- Rolling restart with zero downtime for CloudBees CI on modern cloud platforms: When a controller replica restarts, the other replicas keep running, so users experience no downtime.
- Load balancing: One logical controller spreads its workload across multiple replicas and keeps them in sync.
- Auto-scaling for CloudBees CI on modern cloud platforms: You can configure managed controllers to adjust their number of replicas based on the workload. They scale up when CPU usage exceeds a threshold and scale down when usage returns to normal.

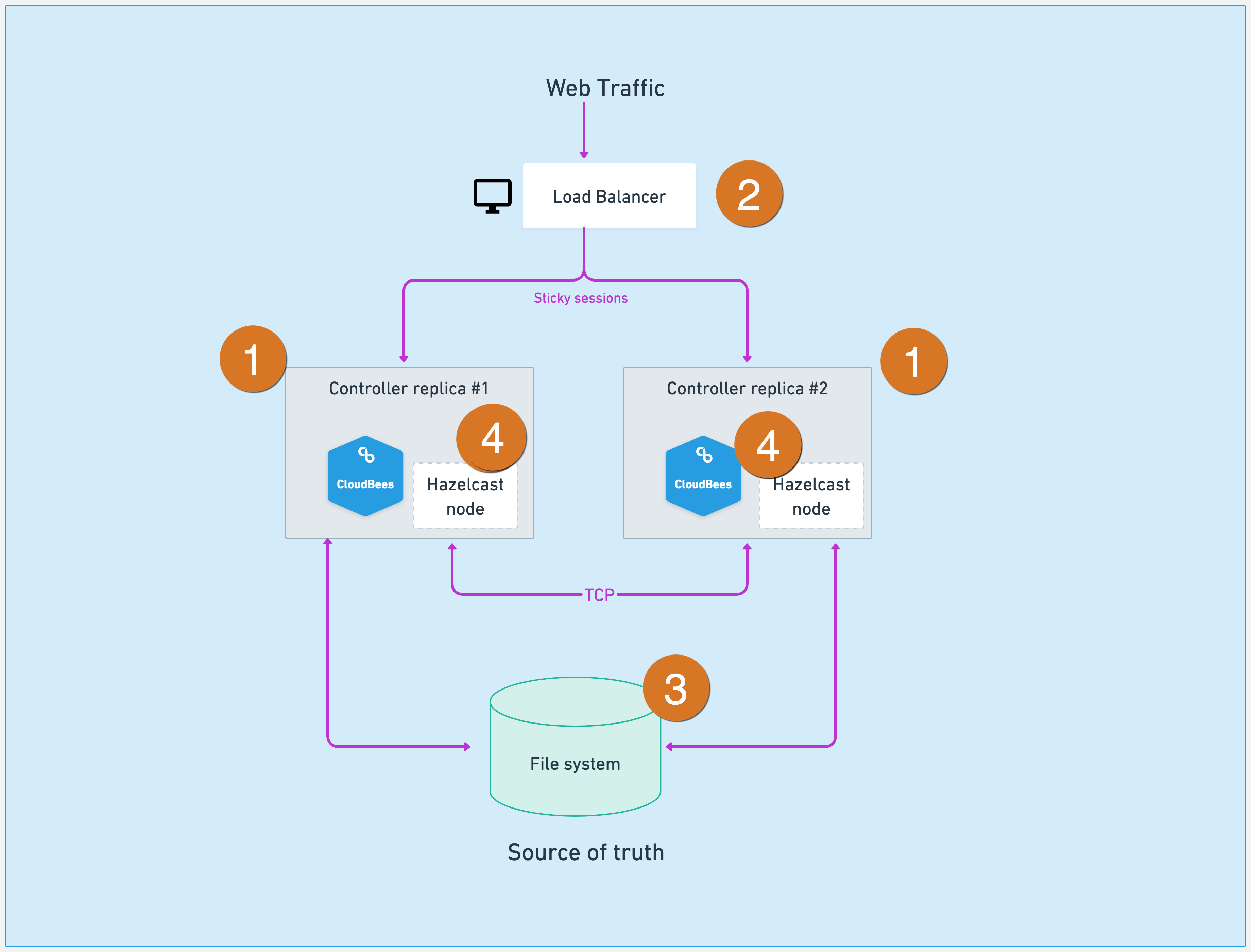

From a high-level perspective, these capabilities are provided by the architecture shown in the image, which consists of the following components:

- Controller replicas make High Availability possible.
- A load balancer spreads the workload across the controller replicas.
- A shared file system persists controller content.
- Hazelcast keeps the controllers’ live state in sync.

High Availability (active/active) vs. High Availability (active/passive)

Unlike the older active/passive HA system, the mode discussed here is symmetric active/active.

In the previous High Availability (active/passive) mode, the cluster is not symmetric: controllers do not share the workload. At any given time, only one replica works as the controller. When a failover occurs, another replica takes over the controller role, and users experience downtime comparable to restarting a Jenkins controller in a non-HA setup.

With CloudBees CI High Availability (active/active), described in this guide, all controller replicas are always working and the controller’s workload is spread among them. When one replica fails, the other replicas adopt all of its builds, and users experience no downtime.

Horizontal auto-scaling

Once you are running controllers with multiple replicas, you can use Kubernetes Horizontal Pod Autoscaling.

The horizontal pod autoscaling controller monitors resource utilization and adjusts the scale of its target to match your configuration settings. For example, if utilization exceeds your defined threshold, the autoscaler increases the number of replicas.

CloudBees recommends performance testing to determine appropriate thresholds that do not affect response time.
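To illustrate how a utilization threshold turns into a replica count, the following sketch applies the single-metric formula from the Kubernetes HPA documentation, desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric), with the default tolerance of 0.1. It is a simplified model, not CloudBees CI code: it ignores stabilization windows, multiple metrics, and pod readiness, and the function name and parameters are illustrative assumptions.

```python
import math


def desired_replicas(current_replicas: int, current_utilization: float,
                     target_utilization: float, min_replicas: int = 1,
                     max_replicas: int = 10, tolerance: float = 0.1) -> int:
    """Approximate the Kubernetes HPA calculation for a single metric (illustrative)."""
    ratio = current_utilization / target_utilization
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: the HPA skips scaling.
        desired = current_replicas
    else:
        # Scale proportionally to how far usage is from the target, rounding up.
        desired = math.ceil(current_replicas * ratio)
    # Keep the result within the configured minimum and maximum replica counts.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # Two replicas at 90% CPU against a 60% target scale up to three.
    print(desired_replicas(2, 90, 60))
```

Because the result is rounded up and clamped, a threshold set too low can keep extra replicas running, which is one reason to validate thresholds with performance testing.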

Details for upscale events:

- Builds are not rebalanced.
- Builds continue to run on existing replicas.
- New builds are dispatched randomly among replicas.
- Because sessions are sticky, existing sessions remain on the same replica.
- New sessions are distributed randomly among replicas.

Details for downscale events:

- Builds from removed replicas are adopted by remaining replicas.
- Web sessions associated with a removed replica are redirected to a remaining replica.
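The session behavior described for upscale and downscale events can be pictured with a toy router. This is a hypothetical model, not how the CloudBees CI load balancer is implemented: the `StickyRouter` class and the replica names are assumptions made for illustration. It shows sticky sessions staying put when a replica is added, new sessions going to a random replica, and sessions from a removed replica moving to a remaining one.

```python
import random


class StickyRouter:
    """Toy model of sticky-session routing across controller replicas (hypothetical)."""

    def __init__(self, replicas, seed=None):
        self.replicas = list(replicas)
        self.sessions = {}  # session id -> replica currently serving it
        self.rng = random.Random(seed)

    def route(self, session_id):
        replica = self.sessions.get(session_id)
        if replica is None or replica not in self.replicas:
            # New session, or its replica was removed: pick a remaining replica at random.
            replica = self.rng.choice(self.replicas)
            self.sessions[session_id] = replica
        return replica

    def add_replica(self, name):
        # Upscale: existing sessions are not moved to the new replica.
        self.replicas.append(name)

    def remove_replica(self, name):
        # Downscale: affected sessions are reassigned on their next request.
        self.replicas.remove(name)


if __name__ == "__main__":
    router = StickyRouter(["replica-0", "replica-1"], seed=1)
    first = router.route("alice")
    router.add_replica("replica-2")
    print(router.route("alice") == first)  # sticky: same replica after upscale
```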

Upscaling means that more builds are being served, which consumes more resources. When the cluster reaches capacity, new controller replicas cannot be scheduled.

To ensure that you have enough resources, CloudBees recommends the following:

- Use the Kubernetes cluster autoscaler to ensure that the cluster has enough resources to accommodate new replicas.
- Consider using dedicated node pools for controllers.
- Assign a lower priority class to agent pods so that controller pods are scheduled first. This allows controller pods to evict agent pods if necessary.
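The priority class recommendation relies on standard Kubernetes pod priority and preemption. The following sketch builds two `PriorityClass` manifests (API group `scheduling.k8s.io/v1`) as Python dictionaries and prints them as a JSON `List`. The class names and numeric values are hypothetical; what matters is that controller pods get a higher value than agent pods. How you attach `priorityClassName` to controller and agent pod specs depends on your setup and is only shown here as a bare pod spec fragment.

```python
import json


def priority_class(name: str, value: int, description: str) -> dict:
    """Build a Kubernetes PriorityClass manifest; a higher value means higher priority."""
    return {
        "apiVersion": "scheduling.k8s.io/v1",
        "kind": "PriorityClass",
        "metadata": {"name": name},
        "value": value,
        "globalDefault": False,
        # Pods with this class may preempt (evict) lower-priority pods.
        "preemptionPolicy": "PreemptLowerPriority",
        "description": description,
    }


# Hypothetical names and values.
controller_pc = priority_class("ci-controller-high", 100000, "CloudBees CI controller replicas")
agent_pc = priority_class("ci-agent-low", 1000, "Build agents; may be preempted by controllers")

# Fragment of an agent pod spec that references the low-priority class.
agent_pod_spec_fragment = {"spec": {"priorityClassName": agent_pc["metadata"]["name"]}}

if __name__ == "__main__":
    # The output can be saved to a file and applied with `kubectl apply -f <file>`.
    manifest_list = {"apiVersion": "v1", "kind": "List", "items": [controller_pc, agent_pc]}
    print(json.dumps(manifest_list, indent=2))
```

Controller pods need the higher class as well; pods without a priority class default to priority 0, which would rank them below agents that use the class above.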

Install High Availability

You can install High Availability on both CloudBees CI on traditional platforms and CloudBees CI on modern cloud platforms.

You can install High Availability on Azure Kubernetes Service (AKS), Amazon Elastic Kubernetes Service (EKS), and Google Kubernetes Engine (GKE), as well as in other Kubernetes environments.

Considerations about High Availability (HA)

Nodes and agents

CloudBees supports a range of agent connection modes in High Availability, but each agent must have only one executor. Because an agent can connect to only one replica at a time, agents with multiple executors cannot be properly shared among replicas.

To share a high-capacity computer among several concurrent builds, connect multiple single-executor agents from that computer to the replicated controller.
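The one-executor rule and the workaround for large machines can be expressed as a simple check. This sketch uses a hypothetical `AgentSpec` record rather than any CloudBees CI or Jenkins API: `validate_for_ha` flags agents with more than one executor, and `split_machine` models one high-capacity host as several single-executor agents, each of which can connect to a replica independently.

```python
from dataclasses import dataclass


@dataclass
class AgentSpec:
    """Hypothetical description of an agent; not a CloudBees CI API."""
    name: str
    executors: int


def validate_for_ha(agents):
    """Return the names of agents that break the one-executor rule for HA."""
    return [agent.name for agent in agents if agent.executors != 1]


def split_machine(host: str, slots: int):
    """Model one high-capacity machine as several single-executor agents."""
    return [AgentSpec(name=f"{host}-agent-{i}", executors=1) for i in range(slots)]


if __name__ == "__main__":
    agents = split_machine("build-host", 4)
    print([a.name for a in agents])
    print(validate_for_ha(agents + [AgentSpec("legacy-agent", 4)]))
```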

Running builds

CloudBees has tested a range of builds and triggers in High Availability mode to clarify use cases for CloudBees CI High Availability. Pipeline projects are the main focus of High Availability mode and are therefore expected to work without limitations, except for the cases documented below.

Shared libraries

If you use Groovy shared libraries, CloudBees recommends setting them to the new “clone” mode and configuring Git to use a shallow clone.

Updating library checkouts in a common directory (such as $JENKINS_HOME/workspace/) or using the library caching system can cause problems when several replicas access them concurrently. An administrative monitor guides you through making these changes.

Non-Pipeline projects

Problems can arise in High Availability mode with non-Pipeline project types, such as freestyle, matrix, or Maven projects. Other replicas can load the metadata of completed builds of these types, but they cannot take over a running build. As a result, if a replica terminates, any non-Pipeline builds running on it are immediately aborted.
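The difference between Pipeline and non-Pipeline projects when a replica terminates can be summarized as a small decision function. This is a toy model of the behavior described above, not CloudBees CI code; the `ProjectType` enum and the build identifiers are hypothetical.

```python
from enum import Enum


class ProjectType(Enum):
    PIPELINE = "pipeline"
    FREESTYLE = "freestyle"
    MATRIX = "matrix"
    MAVEN = "maven"


def builds_after_replica_loss(running_builds: dict) -> dict:
    """Toy model: map each build running on a terminated replica to its outcome.

    running_builds maps a build id to its ProjectType.
    """
    outcome = {}
    for build_id, project_type in running_builds.items():
        if project_type is ProjectType.PIPELINE:
            # Pipeline builds can be taken over by another replica.
            outcome[build_id] = "adopted"
        else:
            # Freestyle, matrix, and Maven builds cannot be taken over.
            outcome[build_id] = "aborted"
    return outcome


if __name__ == "__main__":
    print(builds_after_replica_loss({
        "app#12": ProjectType.PIPELINE,
        "legacy#7": ProjectType.FREESTYLE,
    }))
```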

Stage View and Blue Ocean plugins

The Stage View and Blue Ocean plugins may not accurately display running builds that are owned by other replicas.

As an alternative, CloudBees recommends the CloudBees Pipeline Explorer Plugin.

Hibernation of managed controllers

Hibernation and High Availability are mutually exclusive modes for managed controllers. If you use hibernation for a managed controller, you cannot use High Availability mode for that controller.

Horizontal scalability

Always weigh the benefit of having multiple replicas against the associated cost for your business case. Horizontal scaling has diminishing returns as the number of replicas increases.

Plugin installation and HA

Plugins can be managed and installed from the screen. When you install a plugin from the UI, you can choose to complete the installation without restarting the controller. In that case, if the controller is running in HA mode, the plugin is loaded only in the replica that performed the installation. You must restart the controller to make the plugin available to all replicas.

This does not happen if you select the option to install the plugin after a restart or if you perform a plugin upgrade, which always requires a restart. In those cases, after the restart, the plugin is loaded in all of the controller replicas.