High Availability installation instructions overview

You can install High Availability on any Kubernetes cluster. The following table links to the installation guide and the pre-installation requirements for each platform.

| Platform | General install guide | Pre-installation requirements |
|---|---|---|
| Azure Kubernetes Service (AKS) | | |
| Amazon Elastic Kubernetes Service (EKS) | | |
| Google Kubernetes Engine (GKE) | | |
| Kubernetes (Kubernetes) | | |

Storage requirements

There are specific storage requirements to use HA (active/active). Please review the specific pre-installation requirements for your platform.

High Availability in a CloudBees CI on modern cloud platforms

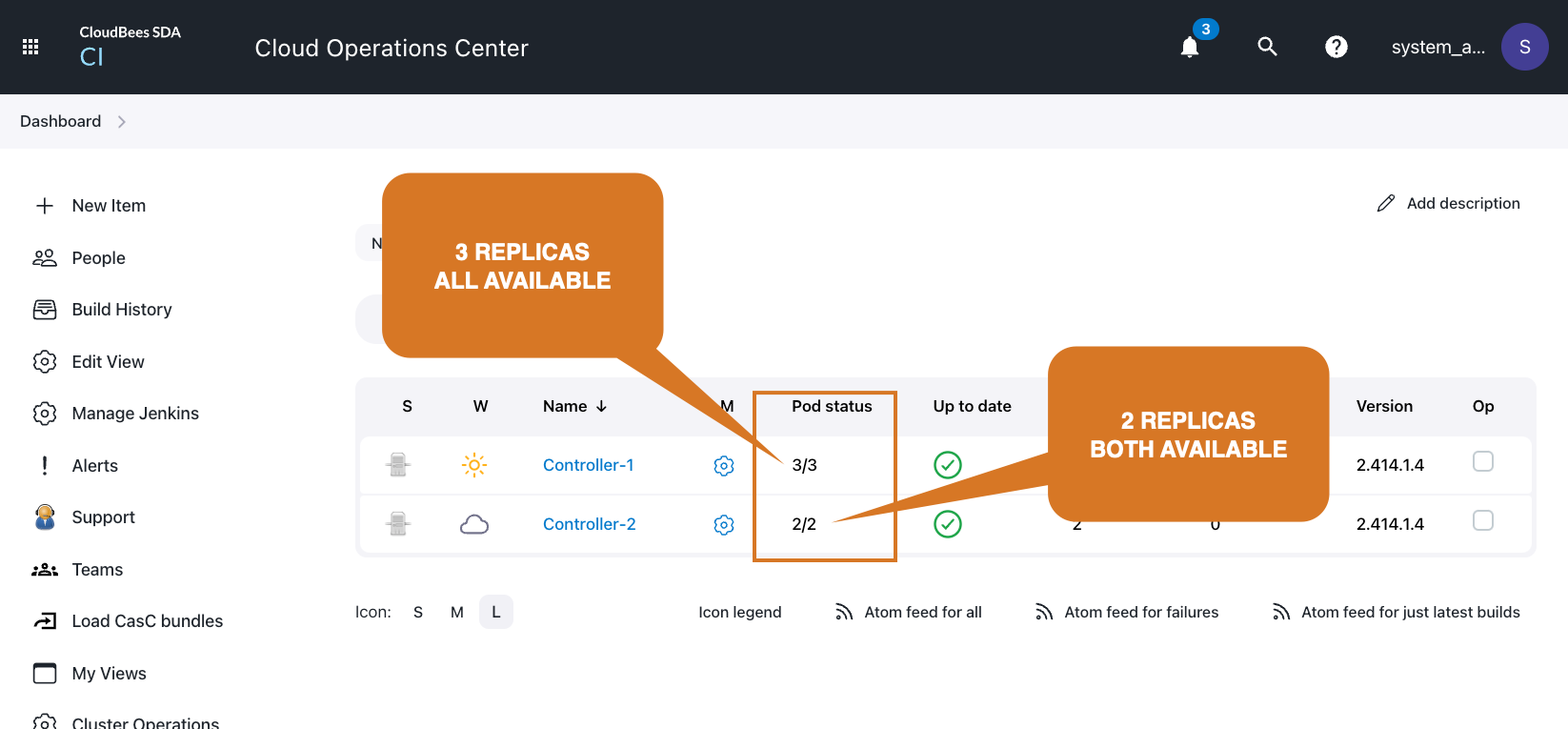

When a managed controller is running in High Availability mode, the Pod status column appears on the operations center dashboard. This column displays how many controller replicas exist for each managed controller, and how many of them are available.

Start a managed controller in High Availability mode using the UI

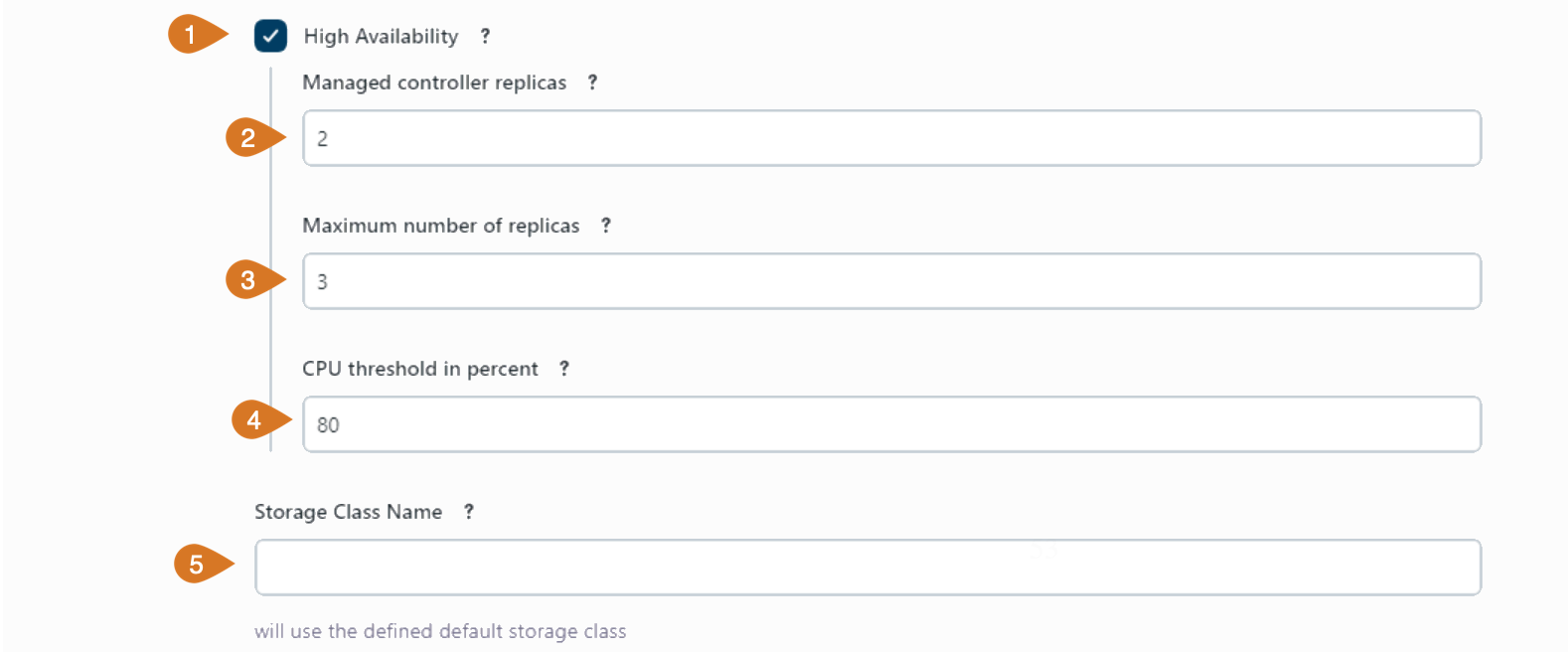

Admin users can create managed controllers that run in High Availability mode from the UI. Create or configure the managed controller as usual, then, in the configuration screen:

- Enable the High Availability mode.
- Enter the number of desired replicas in the Managed controller replicas field.
- Enter the Maximum number of replicas if you want auto-scaling. Set it to zero to disable auto-scaling.
- Set the CPU threshold, in percent, that triggers upscaling or downscaling if auto-scaling has been configured.
- Select the Storage Class Name for the file system shared by the replicas. If it is left empty, the default storage class defined in your Kubernetes cluster is used.
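To see which storage class would apply when the Storage Class Name field is left empty, you can look for the class that carries the standard storageclass.kubernetes.io/is-default-class annotation. The following is only a sketch: default_storage_class is a hypothetical helper name, and it assumes kubectl is already configured for the target cluster.

```shell
#!/bin/bash
# Sketch: print the name of the cluster's default storage class, if any.
# default_storage_class is a hypothetical helper, not part of CloudBees CI.
default_storage_class() {
  # Emit "<name><TAB><annotation value>" per storage class, then keep the default one.
  kubectl get storageclass \
    -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.metadata.annotations.storageclass\.kubernetes\.io/is-default-class}{"\n"}{end}' |
    awk -F '\t' '$2 == "true" { print $1 }'
}

# Example usage (not run automatically):
#   default_storage_class
```

Remember that High Availability needs a class that supports ReadWriteMany, so an empty field is only appropriate when the default class does.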

Migrate an existing managed controller to High Availability (HA)

High Availability (HA) in CloudBees CI on modern cloud platforms requires a storage class with the ReadWriteMany access mode. If you plan to run a managed controller in High Availability (HA) mode and this managed controller is not already using a ReadWriteMany storage class, you must perform a migration.
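To find out whether a migration is needed, you can inspect the access modes of the controller's existing claim. The sketch below assumes the claim follows the jenkins-home-<domain>-0 naming used later in this guide; pvc_is_rwx is a hypothetical helper name.

```shell
#!/bin/bash
# Sketch: succeed if the controller's home volume claim already allows ReadWriteMany.
# Assumes the claim is named jenkins-home-<domain>-0 (the naming used in this guide).
pvc_is_rwx() {
  local domain="$1" modes
  # jsonpath prints the access modes separated by spaces
  modes=$(kubectl get "pvc/jenkins-home-${domain}-0" -o jsonpath='{.spec.accessModes[*]}') || return 2
  case " ${modes} " in
    *" ReadWriteMany "*) return 0 ;;
    *) return 1 ;;
  esac
}

# Example usage (not run automatically):
#   if pvc_is_rwx "$DOMAIN"; then echo "already ReadWriteMany"; else echo "migration required"; fi
```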

The $JENKINS_HOME directory can contain a very large number of files, many of them small, so migrating it to a new storage class can take a long time. The copy is I/O intensive and can degrade controller performance, and at some point you must stop the managed controller and restart it with the new configuration.

To minimize the outage window during the migration, follow these steps:

- Create a volume using the new storage class.
- Import a snapshot of the existing volume into the new volume.
- Stop the controller.
- Sync the latest changes from the source volume to the new volume.
- Rename the existing volume claims. The binding between a controller and its volume claim is name based.
- Update the controller configuration to enable HA.
- Start the controller, backed by the new volume.
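Because the binding is name based, the detailed procedure works with four claim names derived from the controller domain. The helper below is a hypothetical convenience that only prints them; the names themselves are the ones used in the commands throughout this guide.

```shell
#!/bin/bash
# Sketch: print the claim names this guide uses for a given controller domain.
# claim_names is a hypothetical helper; it does not contact the cluster.
claim_names() {
  local domain="$1"
  if [ -z "$domain" ]; then
    echo "usage: claim_names <domain>" >&2
    return 1
  fi
  echo "live=jenkins-home-${domain}-0"          # claim mounted by the controller
  echo "backup=backup-jenkins-home-${domain}-0" # claim on the volume restored from the snapshot
  echo "rwx=rwx-jenkins-home-${domain}-0"       # claim on the new ReadWriteMany volume
  echo "old=old-jenkins-home-${domain}-0"       # live claim after it has been renamed
}

# Example usage (not run automatically):
#   claim_names mc
```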

Required privileges

The following procedures require these privileges in your Kubernetes/OpenShift cluster:

- Job/{create,delete,get,list}
- PersistentVolumeClaim/{create,delete,get,list}
- PersistentVolume/{create,patch,delete,get,list}

Please ensure you have the corresponding privileges before proceeding to the next steps. Also ensure that the RWX storage class has been created and tested with a sample application.
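One way to check the privileges listed above is kubectl auth can-i, which exits with a non-zero status when the action is not allowed. The following sketch loops over the verbs and resources from the list; check_migration_privileges is a hypothetical helper name, and the check runs against whatever namespace and user your current kubectl context selects.

```shell
#!/bin/bash
# Sketch: report which of the privileges listed above are missing.
# check_migration_privileges is a hypothetical helper, not part of CloudBees CI.
check_migration_privileges() {
  local missing=0 resource verb
  for resource in jobs persistentvolumeclaims persistentvolumes; do
    for verb in create delete get list; do
      if ! kubectl auth can-i "$verb" "$resource" >/dev/null 2>&1; then
        echo "missing: ${verb} ${resource}"
        missing=1
      fi
    done
  done
  # patch is only required on persistent volumes
  if ! kubectl auth can-i patch persistentvolumes >/dev/null 2>&1; then
    echo "missing: patch persistentvolumes"
    missing=1
  fi
  return "$missing"
}

# Example usage (not run automatically):
#   check_migration_privileges && echo "all required privileges are present"
```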

Cleaning up the source controller

Prior to starting the migration, consider the following steps to reduce the migration time.

- Discard fingerprints if you don’t actively require them.
- Discard old builds or any build that is not required for migration.
- …

Take a volume snapshot of the source controller

Most cloud providers offer a way to take a snapshot of a live volume backed by block storage.

Please refer to your cloud provider documentation for explicit details on how to do this.

Create a volume from the latest snapshot

Using your cloud provider tools, create a new volume from an existing snapshot. Create it in the same availability zone as the original volume so that it can be mounted from within the cluster. Note the volume ID; you will need it below.

Set the controller domain so that it can be used in the following commands:

    export DOMAIN="<domain>"

Export the definition of the persistent volume currently bound to the controller's claim:

    kubectl get pv "$(kubectl get "pvc/jenkins-home-${DOMAIN}-0" -o go-template='{{.spec.volumeName}}')" -o yaml > "pv-backup-jenkins-home-${DOMAIN}-0.yaml"

Edit pv-backup-jenkins-home-${DOMAIN}-0.yaml as follows:

- Remove .metadata.
- Add .metadata.name, and set it, for example, to backup-jenkins-home-${DOMAIN}-0.
- Remove .spec.claimRef.
- Remove .status.
- Edit .spec to update the volume ID reference to the volume ID you noted earlier. The exact field varies depending on the cloud provider. For example, when using GCE persistent disks, it is .spec.gcePersistentDisk.pdName.

Here is a sample volume that applies to a GCE disk, for reference.

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      labels:
        failure-domain.beta.kubernetes.io/region: us-east1
        failure-domain.beta.kubernetes.io/zone: us-east1-b
      name: backup-jenkins-home-$DOMAIN-0
    spec:
      accessModes:
      - ReadWriteOnce
      capacity:
        storage: 100Gi
      gcePersistentDisk:
        fsType: ext4
        pdName: backup-volume
      nodeAffinity:
        required:
          nodeSelectorTerms:
          - matchExpressions:
            - key: failure-domain.beta.kubernetes.io/zone
              operator: In
              values:
              - us-east1-b
            - key: failure-domain.beta.kubernetes.io/region
              operator: In
              values:
              - us-east1
      persistentVolumeReclaimPolicy: Delete
      storageClassName: my-source-storage-class
      volumeMode: Filesystem
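The manual edits to the persistent volume definition can also be scripted. The following sketch assumes jq is installed (the original procedure does not require it) and that the volume is a GCE persistent disk; on other providers the volume ID lives in a different field. prepare_backup_pv is a hypothetical helper that reads the JSON form of the persistent volume on standard input. Unlike the sample, it does not keep the labels, so add them back if you rely on them.

```shell
#!/bin/bash
# Sketch: apply the manual edits to a PersistentVolume given as JSON on stdin.
# Assumptions: jq is available; the volume is a GCE persistent disk.
# prepare_backup_pv is a hypothetical helper, not part of CloudBees CI.
prepare_backup_pv() {
  local new_name="$1" volume_id="$2"
  jq --arg name "$new_name" --arg id "$volume_id" '
    del(.status, .spec.claimRef)          # remove .status and .spec.claimRef
    | .metadata = {name: $name}           # replace .metadata with only the new name
    | .spec.gcePersistentDisk.pdName = $id  # point at the volume restored from the snapshot
  '
}

# Example usage (not run automatically); kubectl create -f also accepts JSON:
#   pv=$(kubectl get "pvc/jenkins-home-${DOMAIN}-0" -o go-template='{{.spec.volumeName}}')
#   kubectl get pv "$pv" -o json |
#     prepare_backup_pv "backup-jenkins-home-${DOMAIN}-0" "<volume-id>" > "pv-backup-jenkins-home-${DOMAIN}-0.yaml"
```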

Create the new persistent volume with:

    kubectl create -f pv-backup-jenkins-home-${DOMAIN}-0.yaml

Create a new persistent volume claim referencing the new persistent volume

    kubectl get "pvc/jenkins-home-${DOMAIN}-0" -o yaml > pvc-backup-jenkins-home-${DOMAIN}-0.yaml

Edit pvc-backup-jenkins-home-${DOMAIN}-0.yaml as follows:

- Remove .metadata.
- Add .metadata.name, and set it, for example, to backup-jenkins-home-${DOMAIN}-0.
- Edit .spec.volumeName to point to the persistent volume you created just above (backup-jenkins-home-${DOMAIN}-0 unless you changed it to something else).
- Remove .status.

Here is a sample persistent volume claim applying to the previously referenced persistent volume. Replace <storage-class> with the name of the storage class.

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: backup-jenkins-home-${DOMAIN}-0
    spec:
      accessModes:
      - ReadWriteOnce
      resources:
        requests:
          storage: 10Gi
      storageClassName: <storage-class>
      volumeMode: Filesystem
      volumeName: backup-jenkins-home-${DOMAIN}-0

kubectl create -f pvc-backup-jenkins-home-${DOMAIN}-0.yaml

Most storage classes now use volumeBindingMode: WaitForFirstConsumer, which means the persistent volume claim is only bound once a pod uses it.
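If you are unsure which binding mode your storage class uses, you can read it from the StorageClass object. This is a small sketch; needs_consumer_pod is a hypothetical helper name and the storage class name is whatever you use in your claim.

```shell
#!/bin/bash
# Sketch: succeed if the given storage class only binds claims once a pod uses them.
# needs_consumer_pod is a hypothetical helper, not part of CloudBees CI.
needs_consumer_pod() {
  local storage_class="$1" mode
  mode=$(kubectl get "storageclass/${storage_class}" -o jsonpath='{.volumeBindingMode}')
  [ "$mode" = "WaitForFirstConsumer" ]
}

# Example usage (not run automatically):
#   if needs_consumer_pod "<storage-class>"; then echo "create a pod to bind the claim"; fi
```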

Create a pod referencing the PVC you just created

Create allocate-backup-jenkins-home-${DOMAIN}-0.yaml as follows:

    cat > allocate-backup-jenkins-home-${DOMAIN}-0.yaml <<EOF
    apiVersion: batch/v1
    kind: Job
    metadata:
      name: allocate-backup-jenkins-home-${DOMAIN}-0
    spec:
      template:
        spec:
          volumes:
          - name: volume
            persistentVolumeClaim:
              claimName: backup-jenkins-home-${DOMAIN}-0
          containers:
          - name: busybox
            image: busybox
            command: ["true"]
            volumeMounts:
            - mountPath: /var/volume
              name: volume
            resources:
              limits:
                cpu: 100m
                memory: 100Mi
              requests:
                cpu: 100m
                memory: 100Mi
          restartPolicy: Never
      backoffLimit: 4
    EOF

Create the job:

    kubectl create -f allocate-backup-jenkins-home-${DOMAIN}-0.yaml

Then wait for the job to complete:

    kubectl wait --for=condition=complete job/allocate-backup-jenkins-home-${DOMAIN}-0

You can then delete the job:

    kubectl delete job/allocate-backup-jenkins-home-${DOMAIN}-0
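Before moving on, it can be worth confirming that the claim actually reached the Bound phase. The sketch below polls the claim status; wait_for_bound and the POLL_INTERVAL variable are hypothetical names introduced only for this example.

```shell
#!/bin/bash
# Sketch: poll a claim until its phase is Bound, or give up after a number of attempts.
# wait_for_bound and POLL_INTERVAL are hypothetical names used for illustration.
wait_for_bound() {
  local pvc="$1" attempts="${2:-30}" i=0 phase
  while [ "$i" -lt "$attempts" ]; do
    phase=$(kubectl get "pvc/${pvc}" -o jsonpath='{.status.phase}')
    if [ "$phase" = "Bound" ]; then
      return 0
    fi
    i=$((i + 1))
    sleep "${POLL_INTERVAL:-2}"   # seconds between two checks
  done
  return 1
}

# Example usage (not run automatically):
#   wait_for_bound "backup-jenkins-home-${DOMAIN}-0" || echo "claim is still not bound"
```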

Create a volume using the new storage class

Create rwx-jenkins-home-${DOMAIN}-0.yaml as follows:

    cat > rwx-jenkins-home-${DOMAIN}-0.yaml <<EOF
    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: rwx-jenkins-home-${DOMAIN}-0
    spec:
      storageClassName: <your-new-storage-class>
      accessModes:
      - ReadWriteMany
      resources:
        requests:
          storage: 2560Gi # Change this to whatever your storage class requires, or to your needs
    EOF

Create the claim:

    kubectl create -f rwx-jenkins-home-${DOMAIN}-0.yaml

The previous job can be adapted to allocate the volume.

Create allocate-rwx-jenkins-home-${DOMAIN}-0.yaml as follows:

    cat > allocate-rwx-jenkins-home-${DOMAIN}-0.yaml <<EOF
    apiVersion: batch/v1
    kind: Job
    metadata:
      name: allocate-rwx-jenkins-home-${DOMAIN}-0
    spec:
      template:
        spec:
          volumes:
          - name: volume
            persistentVolumeClaim:
              claimName: rwx-jenkins-home-${DOMAIN}-0
          containers:
          - name: busybox
            image: busybox
            command: ["true"]
            volumeMounts:
            - mountPath: /var/volume
              name: volume
            resources:
              limits:
                cpu: 100m
                memory: 100Mi
              requests:
                cpu: 100m
                memory: 100Mi
          restartPolicy: Never
      backoffLimit: 4
    EOF

Create the job:

    kubectl create -f allocate-rwx-jenkins-home-${DOMAIN}-0.yaml

Then wait for the job to complete:

    kubectl wait --for=condition=complete job/allocate-rwx-jenkins-home-${DOMAIN}-0

Initial sync: synchronize snapshot to new volume

Use the migration script below to synchronize the backup volume to the new volume.

Create the script pvc-sync.sh as follows:

    #!/bin/bash
    # Copy the content of one PVC to another using an rsync job running in the cluster.
    set -euo pipefail

    if [ $# -lt 2 ]; then
      echo "Usage: $0 <source_pvc> <dest_pvc>"
      echo "Example: $0 backup-source-pvc new-volume-rwx"
      exit 1
    fi

    source_pvc="${1:?}"
    dest_pvc="${2:?}"

    # Both claims must exist and must be different
    if ! kubectl get "pvc/$source_pvc" -o name > /dev/null 2>&1; then
      echo "PVC $source_pvc does not exist."
      exit 1
    fi

    if ! kubectl get "pvc/$dest_pvc" -o name > /dev/null 2>&1; then
      echo "PVC $dest_pvc does not exist."
      exit 1
    fi

    if [ "$source_pvc" == "$dest_pvc" ]; then
      echo "Source and destination PVC must be different."
      exit 1
    fi

    echo "1. Migration step"
    # The job mounts both claims and mirrors the source into the destination
    kubectl apply -f - <<JOB
    apiVersion: batch/v1
    kind: Job
    metadata:
      name: migration
    spec:
      template:
        metadata:
          annotations:
            cluster-autoscaler.kubernetes.io/safe-to-evict: "false"
        spec:
          volumes:
          - name: volume1
            persistentVolumeClaim:
              claimName: ${source_pvc}
          - name: volume2
            persistentVolumeClaim:
              claimName: ${dest_pvc}
          containers:
          - name: migration
            image: registry.access.redhat.com/ubi8/ubi
            command: [sh]
            args: [-c, "dnf install -y rsync; rsync -avvu --delete /var/volume1/ /var/volume2"]
            volumeMounts:
            - mountPath: /var/volume1
              name: volume1
            - mountPath: /var/volume2
              name: volume2
            resources:
              limits:
                cpu: "2"
                memory: 4G
              requests:
                cpu: "2"
                memory: 4G
          restartPolicy: Never
      backoffLimit: 4
    JOB

    echo "Waiting for migration to complete"
    echo "You can inspect progress using kubectl logs -f job/migration"
    kubectl wait --for=condition=complete --timeout=900m job/migration
    echo "== Data from $source_pvc has been copied over to $dest_pvc"
    kubectl delete job migration

Make it executable with chmod +x pvc-sync.sh.

Then start the initial synchronization:

    ./pvc-sync.sh backup-jenkins-home-${DOMAIN}-0 rwx-jenkins-home-${DOMAIN}-0

Depending on the volume size, this can take a long time (multiple hours).

Once completed, ensure that the ownership of the root directory of the new volume matches the uid/gid your controller runs as (1000/1000 on Kubernetes; this differs on OpenShift, so check your actual setup before applying this).

After obtaining a shell in a pod that mounts the new volume at /var/volume, run:

    chown 1000:1000 /var/volume
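One way to obtain such a shell is a short-lived pod that mounts the new claim. The following is a sketch, not the documented method: render_inspect_pod and the inspect- pod name prefix are hypothetical, and it assumes your cluster policy lets the busybox image run as root, which chown requires.

```shell
#!/bin/bash
# Sketch: print a Pod manifest that mounts the given claim at /var/volume.
# render_inspect_pod and the "inspect-" prefix are hypothetical names.
render_inspect_pod() {
  local pvc="$1"
  cat <<EOF
apiVersion: v1
kind: Pod
metadata:
  name: inspect-${pvc}
spec:
  restartPolicy: Never
  containers:
  - name: shell
    image: busybox
    command: ["sleep", "3600"]
    volumeMounts:
    - mountPath: /var/volume
      name: volume
  volumes:
  - name: volume
    persistentVolumeClaim:
      claimName: ${pvc}
EOF
}

# Example usage (not run automatically):
#   render_inspect_pod "rwx-jenkins-home-${DOMAIN}-0" | kubectl apply -f -
#   kubectl wait --for=condition=Ready "pod/inspect-rwx-jenkins-home-${DOMAIN}-0"
#   kubectl exec "pod/inspect-rwx-jenkins-home-${DOMAIN}-0" -- chown 1000:1000 /var/volume
#   kubectl delete "pod/inspect-rwx-jenkins-home-${DOMAIN}-0"
```

Delete the pod once the ownership has been fixed so that it does not keep the volume mounted.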

Rename source volume

The controller must be stopped before this step. Create a script rename_pvc.sh with the following content:

    #!/bin/bash
    # Rename a PVC by re-creating it under a new name, bound to the same persistent volume.
    set -euo pipefail

    if [ $# -ne 2 ]; then
      echo "Usage: $0 <source_pvc> <dest_pvc>"
      echo "Example: $0 jenkins-home-mc-0 old-jenkins-home-mc-0"
      exit 1
    fi

    source_pvc="${1:?}"
    dest_pvc="${2:?}"

    if ! kubectl get "pvc/$source_pvc" -o name > /dev/null 2>&1; then
      echo "PVC $source_pvc does not exist."
      exit 1
    fi

    if kubectl get "pvc/$dest_pvc" -o name > /dev/null 2>&1; then
      echo "PVC $dest_pvc already exists. It will be replaced by persistent volume of $source_pvc."
      read -p "Are you sure? " -n 1 -r
      echo
      if [[ ! $REPLY =~ ^[Yy]$ ]]; then
        echo "Aborted."
        exit 1
      fi
      # Delete the existing destination PVC, keep its PV as backup
      pv1_name=$(kubectl get "pvc/${dest_pvc}" -o go-template='{{.spec.volumeName}}')
      echo "$pv1_name" > old_pv
      echo "== ${dest_pvc} points to pv/${pv1_name}"
      kubectl patch pv "${pv1_name}" -p '{"spec": {"persistentVolumeReclaimPolicy": "Retain"}}'
      echo "Deleting ${dest_pvc}, we keep PV ${pv1_name} around"
      kubectl delete "pvc/${dest_pvc}"
      #kubectl patch pv ${pv1_name} -p '{"spec":{"claimRef": null}}'
    fi

    mkdir -p generated
    trap "rm -rf generated" EXIT

    # Set the PV reclaim policy to "Retain" so that deleting the source PVC keeps the data,
    # then release the PV so that the renamed PVC can bind to it
    pv_name=$(kubectl get "pvc/${source_pvc}" -o go-template='{{.spec.volumeName}}')
    echo "== ${source_pvc} points to pv/${pv_name}"
    kubectl get "pvc/${source_pvc}" -o yaml > generated/source_pvc.yaml
    kubectl patch pv "${pv_name}" -p '{"spec": {"persistentVolumeReclaimPolicy": "Retain"}}'
    kubectl delete "pvc/${source_pvc}"
    kubectl patch pv "${pv_name}" -p '{"spec":{"claimRef": null}}'

    # We ideally want to retain any user annotation
    cat <<EOF >generated/patch.yaml
    - op: replace
      path: /metadata/name
      value: ${dest_pvc}
    - op: replace
      path: /spec/volumeName
      value: ${pv_name}
    - op: remove
      path: /metadata/finalizers
    - op: remove
      path: /metadata/creationTimestamp
    - op: remove
      path: /metadata/namespace
    - op: remove
      path: /metadata/resourceVersion
    - op: remove
      path: /metadata/uid
    - op: remove
      path: /status
    EOF

    cat <<EOF >generated/kustomization.yaml
    patches:
    - target:
        version: v1
        kind: PersistentVolumeClaim
        name: ${source_pvc}
      path: patch.yaml
    resources:
    - source_pvc.yaml
    EOF

    kubectl apply -k generated/

    pv_name=$(kubectl get "pvc/${dest_pvc}" -o go-template='{{.spec.volumeName}}')
    echo "== ${dest_pvc} points to pv/${pv_name}"
    echo "== Resetting ${pv_name} retain policy to Delete"
    kubectl patch pv "${pv_name}" -p '{"spec": {"persistentVolumeReclaimPolicy": "Delete"}}'
    echo "== You can rename ${dest_pvc} to ${source_pvc} using $0 ${dest_pvc} ${source_pvc}"

Make it executable with chmod +x rename_pvc.sh.

Then run:

    ./rename_pvc.sh jenkins-home-$DOMAIN-0 old-jenkins-home-$DOMAIN-0

Delta sync: synchronize source volume to new volume

This is the same as the initial sync, except that the source PVC is no longer the backup but the PVC holding the live data, which contains all the latest changes.

This delta sync will take a fraction of the time of the initial sync, however it can still be lengthy depending on the number of files in your filesystem, as rsync will still scan them to determine whether they have changed.

Using the pvc-sync.sh script created previously, run:

    ./pvc-sync.sh old-jenkins-home-${DOMAIN}-0 rwx-jenkins-home-${DOMAIN}-0

Edit the controller configuration

Note: This can be done while the delta sync is ongoing.

- Enable High Availability. Instead of a StatefulSet, a Deployment will now be used to manage the controller pods. If you have existing YAML customizations, you will need to adjust them to replace StatefulSet with Deployment.
- Set the number of replicas to 1 (it can be increased later).
- Set Storage Class Name to the new storage class name.

Finally, edit or set the YAML to:

    apiVersion: "apps/v1"
    kind: Deployment
    spec:
      template:
        spec:
          securityContext:
            fsGroupChangePolicy: OnRootMismatch

Rename new volume to the previous name

Rename the new volume to the previous name, so that the controller will be able to mount it:

    ./rename_pvc.sh rwx-jenkins-home-$DOMAIN-0 jenkins-home-$DOMAIN-0

OUTAGE ENDS HERE - Start the controller on the new volume

In Operations Center, start the managed controller.

Remove the previous volume

Once you’ve confirmed that everything is running fine on the new volume, the previous volume as well as its backup volume can be removed.

    kubectl delete pvc backup-jenkins-home-$DOMAIN-0 old-jenkins-home-$DOMAIN-0

If the persistentVolumeReclaimPolicy of the persistent volume is not set to Delete, you may also need to clean up the backup volume afterward:

    kubectl delete pv backup-jenkins-home-$DOMAIN-0
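A persistent volume whose claim was deleted while its reclaim policy was Retain stays in the Released phase. To spot such leftovers after the cleanup, a sketch like the following can help; released_volumes is a hypothetical helper name, and it lists every Released volume in the cluster, not only the ones from this migration, so review the output before deleting anything.

```shell
#!/bin/bash
# Sketch: list persistent volumes that are in the Released phase.
# released_volumes is a hypothetical helper, not part of CloudBees CI.
released_volumes() {
  # Emit "<name><TAB><phase>" per persistent volume, then keep the Released ones.
  kubectl get pv \
    -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.status.phase}{"\n"}{end}' |
    awk -F '\t' '$2 == "Released" { print $1 }'
}

# Example usage (not run automatically):
#   released_volumes
```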