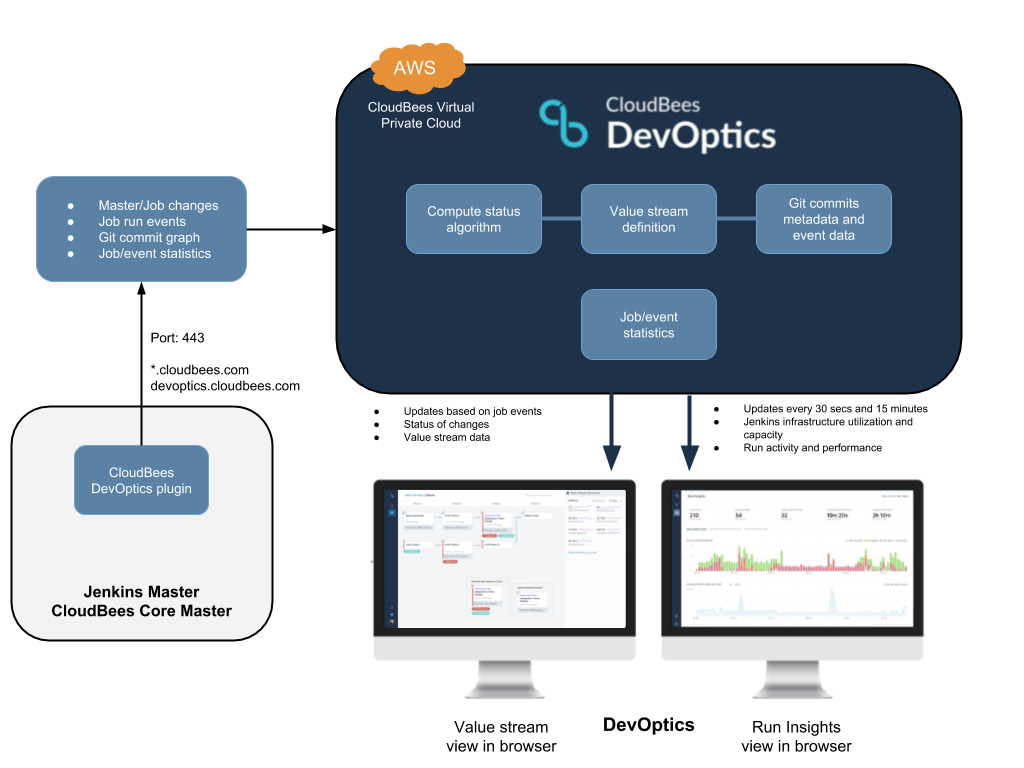

DevOptics has incorporated security right from the beginning. DevOptics collects data via the DevOptics plugins, which are installed on Jenkins masters. Once installed, the plugins send data to the DevOptics service. You then view that data via the DevOptics UI at https://devoptics.cloudbees.com.

CloudBees has a dedicated operations team that manages the DevOptics environment and other environments within the CloudBees infrastructure. The information security team oversees the policies and procedures that the operations team follows. Information security policies are written and reviewed, and internal audit and compliance checks are performed periodically against both development and operational infrastructure designs and use.

Production data and infrastructure for DevOptics are housed in a production environment accessible only to operational team members and lead DevOptics product engineers. This is a very small, core group.

DevOptics has been architected to support a multi-tenancy environment that uses record-level isolation so that data in each row is specific to an account in the database. Other customers cannot search or view your data. Customers do not have direct access to their data and can only view their own information via the DevOptics user interface.

For details about CloudBees' data privacy policy and security policy, review the CloudBees Terms of Service.

How the DevOptics service is hosted

The DevOptics service is hosted in a Virtual Private Cloud on Amazon Web Services (AWS), in one of Amazon’s data centers in the United States, currently in the AWS US-East-1 region. Data is stored in Elasticsearch and encrypted at rest. Communication to the Elasticsearch cluster is over HTTPS, and the ports are only open to the services in the CloudBees Virtual Private Cloud.

How data is encrypted in transit and at rest

All information and requests are transmitted over HTTPS. Data in transit is protected with Transport Layer Security 1.2 (TLS 1.2). Data is also encrypted at rest. We specify current strong cipher suites and continue to monitor industry trends and best practices via external monitoring to keep our cipher suite list up to date.

Data is scoped at the organization level, and data identifiers are prefixed with an organization’s unique id, created at account creation time.
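As an illustration of organization-level scoping, the sketch below prefixes each identifier with an organization ID and filters records by that prefix. The `:` separator, the function names, and the sample IDs are hypothetical and are not taken from the DevOptics implementation.

```python
from typing import List

# Hypothetical sketch of organization-prefixed identifiers. The ":" separator
# and all names here are assumptions, not the DevOptics implementation.


def scoped_id(org_id: str, local_id: str) -> str:
    """Prefix a record identifier with the owning organization's ID."""
    return f"{org_id}:{local_id}"


def visible_to(org_id: str, record_ids: List[str]) -> List[str]:
    """Return only the identifiers that belong to the given organization."""
    prefix = f"{org_id}:"
    return [rid for rid in record_ids if rid.startswith(prefix)]


records = [
    scoped_id("org-a", "value-stream-1"),
    scoped_id("org-b", "value-stream-1"),
    scoped_id("org-a", "value-stream-2"),
]
print(visible_to("org-a", records))
```

Including the separator in the prefix check keeps an organization such as `org-a` from matching records that belong to `org-ab`.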

How data is collected with the DevOptics plugin

After the DevOptics plugin is installed, it sends the following data to the DevOptics service:

- Master/job changes
- Job run events
- Git commit graph metadata
- Averaged job statistic information

The DevOptics service also stores value stream definitions.

Additional event data is collected for early access customers. Detailed information about the data that is collected will be provided to early access customers as part of the early access documentation.

The plugin makes frequent short outbound HTTPS connections to our hosted service to submit relevant master/job changes, job run events, git commit graph information, and job execution and executor node usage statistics.

All HTTP traffic is outbound. Inbound connections to Jenkins are never required or used. Some data might be cached on a Jenkins instance prior to transmission to the DevOptics cloud, but all plugin operations are otherwise read-only and do not interfere with Jenkins operations.

Required port for connection between the DevOptics plugin and the DevOptics service

Jenkins needs to be able to open an HTTPS connection to devoptics.cloudbees.com on port 443, either directly or using an HTTP proxy.

If your Jenkins instance is running within a controlled network that does not permit outgoing connections, do one of the following:

- Add an exception to your controlled network that permits your Jenkins to open a connection to devoptics.cloudbees.com on port 443.
- Configure your Jenkins to use an HTTP proxy and provide the details of an HTTP proxy that can open connections to devoptics.cloudbees.com on port 443.

You can manage the HTTP proxy settings for Jenkins from the Manage Jenkins > Manage Plugins > Advanced screen.

Jenkins also uses these proxy settings to download information about updated plugins and security alerts. If your Jenkins is not able to open connections in general, you will miss these important notifications.

When changing the proxy configuration, it is recommended that you verify the proxy setting using the verification tool.

At a minimum you should test the proxy settings for https://devoptics.cloudbees.com/. You may also want to test the proxy settings for https://jenkins-updates.cloudbees.com/ if you are running a CloudBees distribution of Jenkins, or for https://updates.jenkins-ci.org/ if you are running the community distribution of Jenkins.
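As a rough complement to the checks described above, the following sketch tests whether a direct TCP connection to a host and port can be opened from the machine that runs Jenkins. It is a minimal sketch, not a CloudBees tool: the function name is hypothetical, and it only exercises a direct connection, so it says nothing about connectivity through an HTTP proxy.

```python
import socket


def can_open_tcp(host: str, port: int = 443, timeout: float = 5.0) -> bool:
    """Return True if a TCP connection to host:port can be opened directly."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers DNS failures, refused connections, and timeouts.
        return False
```

For example, `can_open_tcp("devoptics.cloudbees.com", 443)` returns `False` on a network that blocks outgoing connections to that host.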

Data sent to the DevOptics service

Connection to the DevOptics service

The plugin verifies that the connection to DevOptics is still available and synchronizes the Jenkins URL with DevOptics every minute.

CD platform monitoring - run insights

The DevOptics plugin publishes specific, targeted statistics about the jobs that are executing and are pending execution. This information does not identify jobs or executions individually. All statistics are aggregated or averaged summaries of the activity on your Jenkins. Each master posts this information, summarizing the jobs for which it is responsible. This information follows the same rules as the rest of the data sent: it is sent via HTTPS and is scoped per account.

Value stream management

The value stream definitions are defined by the users and are stored in the corresponding DevOptics account.

The following data is stored per value stream:

- The name of the value stream.
- The actual value stream flow definition.
- Phases, gates, jobs, feeds.
- Jobs (identified per master).
- The creation/update time for changes to the value stream.

In future DevOptics releases, additional information may be added to value stream definitions, such as the description of the value stream, the author, or other information.

Value stream definitions and updates are stored in the DevOptics service. They are later retrieved and combined with event and Git commit graph information to provide the status of a value stream.

The DevOptics plugin uploads the following sets of data:

- Master job listings/changes - This is a simple list of job names/ids and display names, per Jenkins master. Changes are periodically uploaded to DevOptics.
- Job run events - All value stream job activity event payloads sent from Jenkins masters originate from the pubsub-light plugin. These events are enriched with some additional event-specific data needed by DevOptics.
- Git commit graph commit details - The DevOptics plugin publishes only the Git commit graph metadata, excluding files and their changes. This is the equivalent of “git log” output. This raw stream of commit details (the commit graph) is sent every time a job run executes an SCM checkout. An HTTP header named “Repository” is sent with each request (POST). This header contains an MD5 hash of the repository URL, such as “9b763f4f4f676967ad9f69eefff41b09”.
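To show what a value like the “Repository” header looks like, the sketch below computes the hex MD5 digest of a repository URL. How the plugin normalizes or encodes the URL before hashing is not described here, so hashing the UTF-8 bytes of the URL as written is an assumption, and the function name is hypothetical.

```python
import hashlib


def repository_header_value(repo_url: str) -> str:
    """Return the hex MD5 digest of a repository URL.

    Assumption: the URL is hashed as-is, encoded as UTF-8.
    """
    return hashlib.md5(repo_url.encode("utf-8")).hexdigest()


value = repository_header_value("https://github.com/acme/cool-app.git")
print(len(value), value)
```

The result is always 32 hexadecimal characters, the same shape as the example value shown above.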

Automated security scanning practices

CloudBees security teams use SonarQube to perform routine static analysis security scanning of all DevOptics repos (master branches). Additionally, other scanners such as OWASP ZAP perform daily scans of the staging environment.

How DevOptics repos are scanned

SonarQube is used to scan the front-end JavaScript repo and back-end Java repos.

This is done on each merge to the master branch of the DevOptics repo. The SonarQube quality profiles and gates have been tuned for each project to help separate the signal from the noise.

An engineer on the team regularly triages the dashboard for new bugs and potential vulnerabilities in our services. These are translated into Jira tickets and added to the backlog for minor cases. Critical issues and blockers are assigned a higher priority.

How the DevOptics staging environment is scanned

The staging environment is scanned daily using the OWASP ZAP tool. A full scan is run against the DevOptics environment in staging.

This full scan includes:

- A passive baseline scan to spider the DevOptics application and check the resources for different vulnerabilities involving the setup and configuration of the API (for example, information in headers, cache control, application errors, and others). This does not involve running any attack against the application.
- An active scan that runs actual exploits against staging. This involves running attacks that test the application for vulnerabilities such as SQL injection and cross-site scripting (XSS).

A configuration file is set up to set the status of the pipeline to fail whenever a high-risk vulnerability is discovered. Failures and other issues with running the pipeline notify a Slack channel that is constantly monitored by the team.
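The gating rule described above can be sketched as follows. The report layout used here is a hypothetical, simplified stand-in; the actual ZAP report format and the configuration file used by the team are not described in this document.

```python
import json

# Hypothetical, simplified report layout; a real scanner report may differ.
SAMPLE_REPORT = json.dumps({
    "alerts": [
        {"name": "Missing cache-control header", "risk": "Low"},
        {"name": "SQL injection", "risk": "High"},
    ]
})


def high_risk_alerts(report_json: str) -> list:
    """Return the names of alerts whose risk level is High."""
    report = json.loads(report_json)
    return [a["name"] for a in report.get("alerts", []) if a.get("risk") == "High"]


def pipeline_status(report_json: str) -> str:
    """Return "FAILURE" if any high-risk alert is present, else "SUCCESS"."""
    return "FAILURE" if high_risk_alerts(report_json) else "SUCCESS"


print(pipeline_status(SAMPLE_REPORT))
```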

An HTML report is generated with the results of the scans, and is archived so that it is accessible from the Jenkins job. Vulnerabilities identified by the tool are added to the backlog, with the appropriate priority.

Verification of connection to the DevOptics service

The following items are verified every minute.

| Data | Description | Example |
|---|---|---|
| Instance ID | The Jenkins instance identifier | 06035cc69ad8eabd2dd58743dd65c7d3 |
| Timestamp | The number of milliseconds since the UNIX epoch | 1547564719124 |
| Jenkins URL | The URL of the Jenkins instance | https://jenkins.example.com |
| Jenkins version | The version of Jenkins | 2.249.3 |
| Client name | The name of the DevOptics plugin | DevOptics |
| Client version | The version of the DevOptics plugin | 1.1561 |
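Timestamps in these tables are expressed in milliseconds since the UNIX epoch. The short sketch below converts the example timestamp value into a readable UTC time; the function name is hypothetical.

```python
from datetime import datetime, timezone


def epoch_millis_to_utc(millis: int) -> datetime:
    """Convert milliseconds since the UNIX epoch to a timezone-aware UTC datetime."""
    seconds, ms = divmod(millis, 1000)
    return datetime.fromtimestamp(seconds, tz=timezone.utc).replace(microsecond=ms * 1000)


print(epoch_millis_to_utc(1547564719124).isoformat())
# 2019-01-15T15:05:19.124000+00:00
```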

Verification of CD platform monitoring - run insights

Each report that is sent to support run insights includes the following information:

| Data | Description | Example |
|---|---|---|
| Instance ID | The Jenkins instance identifier | 06035cc69ad8eabd2dd58743dd65c7d3 |
| Timestamp | The number of milliseconds since the UNIX epoch | 1547564719124 |

The following updates are made to the current activity bar every 30 seconds:

| Data | Description | Example |
|---|---|---|
| Active executors | The number of executors currently running tasks | 5 |
| Idle executors | The number of executors not currently in use | 3 |
| Queue size | The current size of the build queue | 12 |
| Queue time | The cumulative amount of time spent queuing for all the tasks currently in the build queue | 4532 |
| Time to idle | Jenkins' best estimate of how long until all currently running jobs have completed, if no new jobs are started | 1934 |

The following updates are made every 15 minutes:

| Data | Description | Example |
|---|---|---|
| Active executors | The number of executors currently running tasks | 5 |
| Idle executors | The number of executors not currently in use | 3 |
| Queue size | The current size of the build queue | 12 |
| Queue time | The cumulative amount of time spent queuing for all the tasks currently in the build queue | 4532 |
| Time to idle | Jenkins' best estimate of how long until all currently running jobs have completed, if no new jobs are started | 1934 |
| Runs building success | How long successful builds spent running, broken down into statistical summaries | S0=3, S1=2356, S2=12455, min=15, max=2014 |
| Runs queuing success | How long successful builds spent queuing, broken down into statistical summaries | S0=3, S1=2356, S2=12455, min=15, max=2014 |
| Runs building unstable | How long unstable builds spent running, broken down into statistical summaries | S0=3, S1=2356, S2=12455, min=15, max=2014 |
| Runs queuing unstable | How long unstable builds spent queuing, broken down into statistical summaries | S0=3, S1=2356, S2=12455, min=15, max=2014 |
| Runs building failure | How long failed builds spent running, broken down into statistical summaries | S0=3, S1=2356, S2=12455, min=15, max=2014 |
| Runs queuing failure | How long failed builds spent queuing, broken down into statistical summaries | S0=3, S1=2356, S2=12455, min=15, max=2014 |
| Runs building aborted | How long aborted builds spent running, broken down into statistical summaries | S0=3, S1=2356, S2=12455, min=15, max=2014 |
| Runs queuing aborted | How long aborted builds spent queuing, broken down into statistical summaries | S0=3, S1=2356, S2=12455, min=15, max=2014 |
| Runs building total | How long all builds spent running, broken down into statistical summaries | S0=3, S1=2356, S2=12455, min=15, max=2014 |
| Runs queuing total | How long all builds spent queuing, broken down into statistical summaries | S0=3, S1=2356, S2=12455, min=15, max=2014 |
| Node count | The number of nodes in the Jenkins master, broken down into statistical summaries | S0=12, S1=48, S2=192, min=4, max=4 |
| Node online | The number of nodes in the Jenkins master that are currently online, broken down into statistical summaries | S0=12, S1=42, S2=150, min=3, max=4 |
| Per label breakdown | The label name, followed by the metrics for each label. Each of the metrics is broken down into statistical summaries. | linux |
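The Node count and Node online example values above are consistent with S0, S1, and S2 being the count, the sum, and the sum of squares of the sampled values (for instance, 12 samples of 4 give S0=12, S1=48, S2=192). That reading is an inference from the examples rather than something this document states. Under that assumption, the sketch below shows how such a summary could be built and how a mean and standard deviation could be recovered from it without access to the individual samples. The function names are hypothetical.

```python
import math


def summarize(samples):
    """Reduce samples to S0 (count), S1 (sum), S2 (sum of squares), min, and max."""
    return {
        "S0": len(samples),
        "S1": sum(samples),
        "S2": sum(x * x for x in samples),
        "min": min(samples),
        "max": max(samples),
    }


def mean_and_stddev(summary):
    """Recover the mean and population standard deviation from S0, S1, and S2."""
    n, s1, s2 = summary["S0"], summary["S1"], summary["S2"]
    mean = s1 / n
    variance = max(s2 / n - mean * mean, 0.0)  # clamp tiny negative rounding errors
    return mean, math.sqrt(variance)


# Six samples of 3 and six samples of 4 reproduce the "Node online" example row.
node_online = summarize([3] * 6 + [4] * 6)
print(node_online)
print(mean_and_stddev(node_online))
```

Summaries of this form can be added together field by field (taking the smaller min and larger max), which is one reason aggregated statistics can be reported without identifying individual jobs or runs.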

Verification of value stream management data

See https://jenkinsci.github.io/pubsub-light-plugin/org/jenkinsci/plugins/pubsub/EventProps.Jenkins.html for more information about the items in the following tables.

The following updates are made to the master job listing and changes:

| Data | Description | Example |
|---|---|---|
| instanceID | Jenkins instance ID | a32f78eb60e71a1345c5e3942d214ebb |
| masterURL | Jenkins instance URL | https://jenkins/acme.com/ |
| jobs | An array of simple job display details | [ { "name": "production/acme-war/master", "displayName": "production » acme-war » master" } ] |

The following updates are made to job run events every time a job run starts:

| Data | Description | Example |
|---|---|---|
| jenkins_event_timestamp | Event timestamp in milliseconds since 1970 epoch | 1547473635214 |
| jenkins_event | Event name | job_run_started |
| jenkins_event_uuid | Event unique ID | bb1df4d8-25a5-443f-b43b-faabb4d13b03 |
| jenkins_object_name | Jenkins domain object full name | #45 |
| jenkins_object_url | Jenkins domain object URL | job/production/job/acme-war/master/45/ |
| jenkins_instance_id | Jenkins domain object unique ID | a32f78eb60e71a1345c5e3942d214ebb |
| job_run_queueId | Job run Queue Id | 91878 |
| jenkins_object_id | Jenkins domain object unique ID | 45 |
| jenkins_object_type | Jenkins domain object type | org.jenkinsci.plugins.workflow.job.WorkflowRun |
| job_name | Job name | production/acme-war/master/ |
| job_run_queue_start | Job run queue start time | 1547473635197 |
| job_run_status | Job run status/result | RUNNING |
| jenkins_instance_url | The URL of the Jenkins instance that published the message | https://jenkins/acme.com/ |

The following updates are made every time a job checks out code from a source code management tool:

| Data | Description | Example |
|---|---|---|
| jenkins_event_timestamp | Event timestamp in milliseconds since 1970 epoch | 1547473635214 |
| jenkins_event | Event name | job_run_scm_checkout |
| jenkins_event_uuid | Event unique ID | bb1df4d8-25a5-443f-b43b-faabb4d13b03 |
| jenkins_object_name | Jenkins domain object full name | #45 |
| jenkins_object_url | Jenkins domain object URL | job/production/job/acme-war/master/45/ |
| jenkins_instance_id | Jenkins domain object unique ID | a32f78eb60e71a1345c5e3942d214ebb |
| job_run_queueId | Job run Queue Id | 91878 |
| jenkins_object_id | Jenkins domain object unique ID | 45 |
| jenkins_object_type | Jenkins domain object type | org.jenkinsci.plugins.workflow.job.WorkflowRun |
| job_name | Job name | production/acme-war/master/ |
| job_run_queue_start | Job run queue start time | 1547473635197 |
| job_run_status | Job run status/result | RUNNING |
| jenkins_instance_url | The URL of the CI server (Jenkins master) that runs the job | https://jenkins1.acme.com |
| commit | SCM head commit ID. This field is deprecated. | 1aef6c05382a968ab993a8b17b6ca06231284bcd |
| checkout_commit_data | JSON object detailing all new commits since this SCM repository was checked out by the last job run to finish successfully. Not all commit data is currently uploaded in the event: commit comments, authors, and parent commits are sent in the raw commit details stream instead. | <JSON object> |
| remote_urls | Repository remote URL | https://github.com/acme/cool-app.git |
| browser_url_template | A URL template that can be used to construct browser links to commits in the repo | https://github.com/acme/cool-app/commit/{commit} |
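As an illustration of how a value like browser_url_template might be used, the sketch below substitutes a commit ID for the `{commit}` placeholder. The function name is hypothetical, and simple string replacement is an assumption about how the template is meant to be expanded.

```python
def commit_browser_url(template: str, commit: str) -> str:
    """Fill the {commit} placeholder in a browser URL template."""
    return template.replace("{commit}", commit)


url = commit_browser_url(
    "https://github.com/acme/cool-app/commit/{commit}",
    "1aef6c05382a968ab993a8b17b6ca06231284bcd",
)
print(url)
```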

The following updates are made every time an artifact is produced or consumed:

| Data | Description | Example |
|---|---|---|
| jenkins_event_timestamp | Event timestamp in milliseconds since 1970 epoch | 1547473635214 |
| jenkins_event | Event name | artifact |
| jenkins_event_uuid | Event unique ID | bb1df4d8-25a5-443f-b43b-faabb4d13b03 |
| jenkins_object_name | Jenkins domain object full name | #45 |
| jenkins_object_url | Jenkins domain object URL | job/production/job/acme-war/master/45/ |
| jenkins_instance_id | Jenkins domain object unique ID | a32f78eb60e71a1345c5e3942d214ebb |
| job_run_queueId | Job run Queue Id | 91878 |
| jenkins_object_id | Jenkins domain object unique ID | 45 |
| jenkins_object_type | Jenkins domain object type | org.jenkinsci.plugins.workflow.job.WorkflowRun |
| job_name | Job name | production/acme-war/master/ |
| job_run_queue_start | Job run queue start time | 154747363519 |
| job_run_status | Job run status/result | RUNNING when artifact_producer is “false”; SUCCESS when artifact_producer is “true” |
| jenkins_instance_url | The URL of the Jenkins instance that published the message | https://jenkins/acme.com/ |
| artifact_producer | Flag indicating whether this is a “produced” or “consumed” artifact | true/false |
| artifact_id | The artifact ID | File SHA-512, Docker image ID, Build generated ID |
| artifact_type | The artifact type indicator. Used for artifact categorization. | “File”, “docker”, “rpm”, “aim”, and so on |
| artifact_label | Human readable artifact label. Only if artifact_producer=true. | com.acme:shopping-lib:1.3.9::jar |
| artifacts_consumed | JSON object containing a list of artifacts consumed by a run. For example, Maven dependencies. Each entry in the list will contain "artifact_id", "artifact_type" and "artifact_label". | <JSON object> |

The following updates are made every time a job run ends:

| Data | Description | Example |
|---|---|---|
| jenkins_event_timestamp | Event timestamp in milliseconds since 1970 epoch | 1547473635214 |
| jenkins_event | Event name | job_run_ended |
| jenkins_event_uuid | Event unique ID | bb1df4d8-25a5-443f-b43b-faabb4d13b03 |
| jenkins_object_name | Jenkins domain object full name | #45 |
| jenkins_object_url | Jenkins domain object URL | job/production/job/acme-war/master/45/ |
| jenkins_instance_id | Jenkins domain object unique ID | a32f78eb60e71a1345c5e3942d214ebb |
| job_run_queueId | Job run Queue Id | 91878 |
| jenkins_object_id | Jenkins domain object unique ID | 45 |
| jenkins_object_type | Jenkins domain object type | org.jenkinsci.plugins.workflow.job.WorkflowRun |
| job_name | Job name | production/acme-war/master/ |
| job_run_status | Job run status/result | FAILURE |
| jenkins_instance_url | The URL of the Jenkins instance that published the message | https://jenkins/acme.com/ |

Verification of git commit graph commit details

Each commit entry in a value stream contains the following:

| Data | Description | Example |
|---|---|---|
| sha1 | Commit SHA | 80d17d5ecd6fc6d8c94138108c5a2959d3691264 |
| parents | An array of parent commits | [ "78bf6e664398391f625e4383c0fbd6d97ba06669", "a27551af32af9efd7f68b3bc1da7ed0278601741" ] |
| author | Commit author details | Name, emailAddress, when, tzOffset |
| committer | Commit committer details | Name, emailAddress, when, tzOffset |
| fullMessage | The full commit message. Limited to the first 10922 characters. | Free text |
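The fullMessage limit can be pictured with the short sketch below, which keeps only the first 10922 characters of a commit message. The constant and function names are hypothetical, and whether the plugin counts characters the same way Python does (by Unicode code point) is an assumption.

```python
MAX_MESSAGE_CHARS = 10922  # limit stated for fullMessage in the table above


def truncate_message(full_message: str, limit: int = MAX_MESSAGE_CHARS) -> str:
    """Keep only the first `limit` characters of a commit message."""
    return full_message[:limit]


print(len(truncate_message("x" * 20000)))
```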