When an administrator wants to connect an inbound (formerly known as “JNLP”) external agent to a Jenkins controller, such as a Windows virtual machine running outside the cluster and using the agent service wrapper, two connection types are available in CloudBees CI on modern cloud platforms.

| Connection type | Description |
|---|---|
| WebSocket for Agent Remoting Connections (available in CloudBees CI on modern cloud platforms version 2.222.2.1 and later) | This is the recommended method for all new inbound agent connections. It replaces the older method and is simpler to establish. The agent calls back on the regular HTTP port and uses WebSocket to set up a bidirectional channel. This makes inbound agents much easier to connect when a reverse proxy is present: if the HTTP(S) port is already serving traffic, most proxies allow WebSocket connections with no additional configuration. |
| Inbound TCP (formerly known as JNLP) | With this connection, the controller must expose a non-HTTP port (conventionally 50000) and then wait for an agent pod to call back to it. This can complicate network architectures. For Gateway API deployments, refer to Connect inbound agents using NodePort with Kubernetes Gateway API. |

Connect inbound agents using WebSocket for Agent Remoting Connections

CloudBees CI supports using WebSocket transport to connect inbound agents to controllers. This works for shared agents and clouds, as well.

This feature is available in CloudBees CI version 2.222.2.1 and later.

This functionality lets you make a connection via HTTP(S), then holds the connection open to create a bidirectional channel to send data back and forth between controllers and inbound agents.

No special network configuration is needed since the regular HTTP(S) port for your controller is used for all communications.

For additional details about WebSocket support in Jenkins, refer to JEP-222: WebSocket Support for Jenkins Remoting and CLI.

To connect inbound agents:

- On the Agent Configuration screen, in Launch method, select Launch agent by connecting it to the controller.
- Select Use WebSocket. This option lets the agent connect to the controller over WebSocket.
- Follow the instructions on the Agent page to connect the agent. For more details, refer to Launching inbound agents.
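
As a rough illustration of the last step, the command shown on the Agent page usually resembles the one assembled below. This is a sketch under assumptions, not the exact command for your installation: the `-url`, `-secret`, `-name`, and `-webSocket` options are those of recent Jenkins Remoting `agent.jar` releases (older releases use different option names), and the helper name `agent_ws_command`, the URL, the agent name, and the secret are placeholders. Always copy the real command from the Agent page.

```shell
# Hypothetical helper: prints (does not run) an agent launch command that uses WebSocket.
# Arguments: controller URL, agent name, agent secret (all placeholders).
agent_ws_command() {
  controller_url=$1
  agent_name=$2
  agent_secret=$3
  printf 'java -jar agent.jar -url %s -secret %s -name %s -webSocket\n' \
    "$controller_url" "$agent_secret" "$agent_name"
}

# Example with placeholder values; the command is only printed for review.
agent_ws_command "https://cbci.example.com/controller-1/" "windows-agent" "xxx"
```

Printing the command instead of running it makes it easy to compare against the command displayed on the Agent page before launching anything.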

This content applies only to CloudBees CI on modern cloud platforms.

Connect inbound agents using NodePort with Kubernetes Gateway API

Kubernetes Gateway API manages HTTP/HTTPS traffic through HTTPRoute resources.

While Gateway API defines a TCPRoute resource, using it for inbound agents is not practical because it requires a separate route for each controller.

For inbound agent connections using Remoting over TCP in a Gateway API deployment, use the allowExternalAgents option to create a dedicated Kubernetes NodePort service for each controller.

This applies to all TCP-based remoting paths:

- Inbound agents connecting to a controller from outside the cluster.
- Controllers connecting to the operations center without WebSockets (cross-cluster).
- Operations center connecting to controllers without WebSockets (cross-cluster).

CloudBees recommends WebSocket for all new deployments, particularly with Gateway API, because it uses the standard HTTP/HTTPS port and requires no additional configuration. For setup instructions, refer to WebSocket for Agent Remoting Connections. NodePort is needed only when WebSocket is not an option (for example, network or firewall policies that prevent WebSocket HTTP upgrade connections).

Prerequisites

- A CloudBees CI deployment using Kubernetes Gateway API for ingress routing.
- The controller configured to allow external agent connections.

Enable external agent connections

To create a NodePort service for inbound TCP agent connections, enable the Allow external agents option in the controller provisioning configuration.

In the Configuration as Code (CasC) jenkins.yaml file, set the following:

allowExternalAgents: true

When this option is enabled:

- CloudBees CI creates a Kubernetes `Service` of type `NodePort` for the controller’s agent listener.
- The controller container always listens on port 50000, but the Kubernetes Service port is unique per controller (starting at 50001, incremented by the controller ID).
- Kubernetes allocates a node port from the cluster’s node port range (default: 30000–32767), exposing the controller’s agent listener on every cluster node.

Configure the agent tunnel

After enabling external agent connections, configure the agent tunnel manually.

The controller does not automatically advertise the NodePort address to agents.

CloudBees CI injects the following environment variables into the controller pod:

- `JNLP_NODE_PORT`: The allocated `NodePort` port number.
- `JNLP_NODE_IP`: The IP address of the node where the controller pod is running.
- `JNLP_NODE_NAME`: The Kubernetes node’s internal hostname. This is typically not resolvable from outside the cluster; use a known external hostname or IP address instead.

Set the Tunnel connection through option for the agent using the external hostname of your cluster (or load balancer) combined with JNLP_NODE_PORT.

- Option 1: In the CasC `jenkins.yaml` file, set the following: `tunnel: "<external-hostname>:${JNLP_NODE_PORT}"`. Replace `<external-hostname>` with the externally reachable hostname or IP of your cluster nodes (for example, the operations center hostname if traffic can reach the `NodePort` through it).
- Option 2: For a Kubernetes cloud, use the `jenkinsTunnel` field: `jenkinsTunnel: "<external-hostname>:${JNLP_NODE_PORT}"`. Replace `<external-hostname>` with the externally reachable hostname or IP.
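
Before pasting a tunnel value into either option, it can help to sanity-check it. The sketch below assembles a `host:port` string and rejects ports outside the default node port range mentioned above. The helper name `build_tunnel` and the sample hostname and port are hypothetical, and the range check assumes your cluster has not customized its node port range.

```shell
# Hypothetical helper: builds a "host:port" tunnel value and rejects ports
# outside the default Kubernetes node port range (30000-32767).
build_tunnel() {
  host=$1
  port=$2
  case $port in
    ''|*[!0-9]*) echo "port must be numeric: $port" >&2; return 1 ;;
  esac
  if [ "$port" -lt 30000 ] || [ "$port" -gt 32767 ]; then
    echo "port $port is outside 30000-32767" >&2
    return 1
  fi
  printf '%s:%s\n' "$host" "$port"
}

# Example with placeholder values.
build_tunnel "cbci.example.com" "31234"
```

A value such as `cbci.example.com:50000` is rejected by this check, which catches the common mistake of using the container port instead of the allocated node port.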

Verify the NodePort service

After enabling external agent connections and provisioning the controller, complete the following steps to verify the NodePort service:

- Run the following command to list services for the controller: `kubectl get svc -n <namespace> -l com.cloudbees.cje.type=master`. Replace `<namespace>` with the namespace where the controller is deployed.
- In the output, look for a service of type `NodePort`. The `PORT(S)` column displays the mapping as `<service-port>:<node-port>/TCP`. The node port (auto-assigned by Kubernetes in the 30000–32767 range) is the externally reachable port and matches the `JNLP_NODE_PORT` value injected into the controller pod.
- To test the connection, run `curl <node-ip>:<node-port>`. Replace `<node-ip>` with any cluster node IP and `<node-port>` with the allocated NodePort. The expected output is similar to:

      Jenkins-Agent-Protocols: Diagnostic-Ping, JNLP4-connect, OperationsCenter2, Ping
      Jenkins-Version: {core-version}
      Jenkins-Session: f4e6410a
      Client: <client-ip>
      Server: <server-ip>
      Remoting-Minimum-Version: 3.4
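
If you script this verification, the node port can be pulled out of the `PORT(S)` value with plain shell parameter expansion. The following is a minimal sketch: the helper name `node_port_from_ports_column` and the sample value `50001:31234/TCP` are hypothetical, and it assumes the service exposes a single port mapping.

```shell
# Hypothetical helper: extracts the node port from a PORT(S) value such as
# "50001:31234/TCP" (format: <service-port>:<node-port>/TCP).
# Assumes a single port mapping in the column.
node_port_from_ports_column() {
  ports=$1
  node_part=${ports#*:}              # drop "<service-port>:"
  printf '%s\n' "${node_part%%/*}"   # drop "/TCP"
}

# Example with a placeholder value.
node_port_from_ports_column "50001:31234/TCP"
```

The extracted value can then be compared with the `JNLP_NODE_PORT` value inside the controller pod.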

For High Availability (HA) controllers, TCP agent connections are routed internally between replicas.

No sticky session configuration is needed for

Configure inbound agents using self-signed certificates

You can configure connections for agents using self-signed certificates or enterprise certificates that are not trusted by the shared agent. To configure inbound agents using self-signed certificates, you must use a Kubernetes deployment with a load balancer that supports WebSocket or inbound TCP. The self-signed certificate must be trusted by the client.

Complete the following steps:

-

Type the following command to create a TrustStore called

cacerts.jksand import the certificate:keytool -import -v -trustcacerts -alias cbci.example.com -file cbci.example.com-certificate.pem -keystore cacerts.jks -storepass changeit -

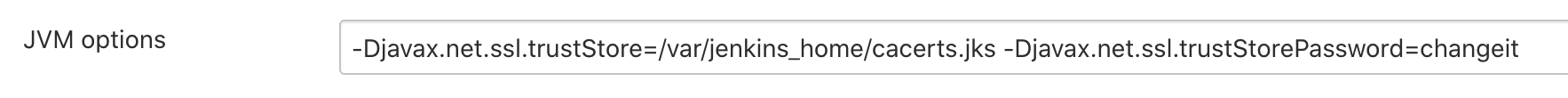

In the operations center, configure the JVM options with the TrustStore and TrustStore password using a location the inbound agent can access:

-

Use the TrustStore when you execute the launch connection from the agent:

java -Djavax.net.ssl.trustStore=/var/jenkins_home/cacerts.jks -Djavax.net.ssl.trustStorePassword=changeit -jar slave.jar -jnlpURL \https://cbci.example.com/cjoc/jnlpSharedSlaves/sharedagent/slave-agent.jnlp -secret xxx
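
The JVM options in the steps above follow a fixed pattern, so they can be generated rather than typed by hand. The sketch below only prints the two standard `javax.net.ssl` system properties already used in the launch command; the helper name `truststore_jvm_opts` is hypothetical, and the exact options to enter in the operations center are those shown in the screenshot, not this output.

```shell
# Hypothetical helper: prints the two JVM system properties that point Java
# at a TrustStore. Arguments: TrustStore path, TrustStore password.
truststore_jvm_opts() {
  store_path=$1
  store_password=$2
  printf '%s\n' "-Djavax.net.ssl.trustStore=$store_path -Djavax.net.ssl.trustStorePassword=$store_password"
}

# Example using the path and password from the steps above.
truststore_jvm_opts "/var/jenkins_home/cacerts.jks" "changeit"
```

To confirm that the certificate was imported before using the TrustStore, `keytool -list -keystore cacerts.jks -storepass changeit -alias cbci.example.com` lists the entry for that alias.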

Test the connection for inbound agents

After you have configured the connection for inbound agents, confirm that the controller is ready to receive external inbound agent requests.

To test the connection for inbound agents:

    $ curl $DOMAIN_NAME:$JNLP_MASTER_PORT
    Jenkins-Agent-Protocols: Diagnostic-Ping, JNLP4-connect, OperationsCenter2, Ping
    Jenkins-Version: {core-version}
    Jenkins-Session: f4e6410a
    Client: 0:0:0:0:0:0:0:1
    Server: 0:0:0:0:0:0:0:1
    Remoting-Minimum-Version: 3.4
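
For automated checks, the response can be tested for the `JNLP4-connect` protocol instead of being read by eye. This is a minimal sketch: the helper name `advertises_jnlp4` and the shortened sample response are hypothetical, and it assumes the response lines look like the output shown above.

```shell
# Hypothetical helper: reads the listener's response on stdin and succeeds
# only if the Jenkins-Agent-Protocols line lists JNLP4-connect.
advertises_jnlp4() {
  grep -i '^Jenkins-Agent-Protocols:' | grep -q 'JNLP4-connect'
}

# Sample response (shortened) used here instead of a live connection.
sample_response='Jenkins-Agent-Protocols: Diagnostic-Ping, JNLP4-connect, OperationsCenter2, Ping
Jenkins-Version: {core-version}
Remoting-Minimum-Version: 3.4'

if printf '%s\n' "$sample_response" | advertises_jnlp4; then
  echo "JNLP4-connect is advertised"
fi
```

Against a live controller, the same check would be `curl -s "$DOMAIN_NAME:$JNLP_MASTER_PORT" | advertises_jnlp4`.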

Once the connection is correctly configured in your cloud, you can then create a new 'node' in your controller:

- Select the icon in the upper-right corner to navigate to the Manage Jenkins page.
- Select Nodes.

The node should be configured with:

- Launch method: 'Launch agent by connecting it to the master'

Port 50000 in replicated managed controllers

On Kubernetes, CloudBees strongly recommends WebSocket transport for inbound agents connecting from outside the cluster.

For NGINX Ingress deployments, you can use the older method of connecting inbound agents with the values.yaml file. In this way, you patch the NGINX Ingress to forward port 50001, 50002, or similar, to the managed controller Services with each controller pod using container port 50000. If the agent cannot resolve the tunnel address, define the Tunnel connection through option by setting the tunnel field in Configuration as Code (CasC) to the ingress hostname; for example, cbci.example.com:50001. This method is not available with Kubernetes Gateway API, which does not support TCP port forwarding.

For all deployments (including Kubernetes Gateway API), you can use the NodePort service created when you select Allow external agents in controller provisioning. Set allowExternalAgents: true in CasC. If an inbound TCP agent connects to the incorrect replica, the connection is automatically proxied. If the agent cannot resolve the tunnel address, define the Tunnel connection through option by setting the tunnel field (or for a Kubernetes cloud, the jenkinsTunnel field) in Jenkins Configuration as Code to ${JNLP_NODE_NAME}:${JNLP_NODE_PORT}. For details, refer to Connect inbound agents using NodePort with Kubernetes Gateway API.

Troubleshoot inbound connections

Kubernetes Gateway API deployments

Gateway API routes HTTP/HTTPS traffic through HTTPRoute resources.

When a controller is started or stopped, the HTTPRoute for that controller is created or removed, which may cause brief connection interruptions depending on your Gateway controller implementation.

For inbound agent connections using NodePort, agent traffic bypasses the Gateway entirely and connects directly to the cluster node.

Connection interruptions are limited to controller pod restarts and Kubernetes node-level events.

CloudBees recommends WebSocket transport for Gateway API deployments, as it uses the standard HTTP/HTTPS port managed by the HTTPRoute and does not require NodePort configuration.

For setup instructions, refer to WebSocket for Agent Remoting Connections.